LocalAI model gallery list

🖼️ 1057 models available

Refer to the Model gallery for more information on how to use the models with LocalAI.

You can install models with the CLI command `local-ai models install <model-name>` or by using the WebUI.
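Once a model is installed and LocalAI is running, it can be queried over LocalAI's OpenAI-compatible HTTP API. Below is a minimal standard-library sketch. It assumes the default address `http://localhost:8080`, the `/v1/chat/completions` endpoint, and a model installed under its gallery name (`qwen3.6-27b` is only an example). `build_chat_request` and `ask` are hypothetical helpers, and sending a request requires a running server.

```python
import json
import urllib.request

# Assumptions: LocalAI listens on its default address and exposes the
# OpenAI-compatible chat endpoint; the model name is the installed gallery name.
BASE_URL = "http://localhost:8080"


def build_chat_request(model: str, prompt: str, base_url: str = BASE_URL) -> urllib.request.Request:
    """Build (without sending) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        f"{base_url}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(model: str, prompt: str) -> str:
    """Send the request and return the first reply (requires a running LocalAI server)."""
    with urllib.request.urlopen(build_chat_request(model, prompt)) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

With a server running, `print(ask("qwen3.6-27b", "Say hello in one sentence."))` would print the model's reply. Building the request separately from sending it keeps the payload easy to inspect or reuse with another HTTP client.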

step-3.7-flash

**Model page**: https://static.stepfun.com/blog/step-3.7-flash/

Step 3.7 Flash is a 198B-parameter sparse Mixture-of-Experts (MoE) vision-language model that combines a 196B-parameter language backbone with a 1.8B-parameter vision encoder for native image understanding. Engineered for high-frequency production workloads, it activates approximately 11B parameters per token and delivers a throughput of up to 400 tokens per second. Step 3.7 Flash supports a 256k context window and offers three selectable reasoning levels (low, medium, and high), so developers can easily balance speed, cost, and cognitive depth.

We built Step 3.7 Flash for developers who need to scale agentic workflows that combine perception, search, and reasoning. It is designed to handle intensive tasks such as parsing massive financial reports in one pass, running multi-step search loops with cross-source verification, or operating concurrent coding agents in high-throughput pipelines. ...
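Roughly 11B of 198B parameters active per token means only about 5.6% of the weights participate in each token. In a typical sparse MoE layer, a router scores all experts and runs only the top few. The sketch below shows generic top-k routing. The 8 toy experts, k=2, and softmax gating over the selected logits are illustrative assumptions, not Step 3.7 Flash's published router design.

```python
import math
from typing import Callable, List, Tuple

Vector = List[float]


def softmax(xs: Vector) -> Vector:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(router_logits: Vector, k: int) -> List[Tuple[int, float]]:
    """Pick the k highest-scoring experts and renormalize their gate weights."""
    ranked = sorted(range(len(router_logits)), key=lambda i: router_logits[i], reverse=True)
    chosen = ranked[:k]
    weights = softmax([router_logits[i] for i in chosen])
    return list(zip(chosen, weights))


def moe_layer(x: Vector, experts: List[Callable[[Vector], Vector]],
              router_logits: Vector, k: int) -> Vector:
    """Combine the outputs of only the selected experts; the others are never evaluated."""
    out = [0.0] * len(x)
    for idx, weight in route_top_k(router_logits, k):
        y = experts[idx](x)
        out = [o + weight * yi for o, yi in zip(out, y)]
    return out


# Toy example: 8 hypothetical "experts" that each scale the input by a different factor.
experts = [lambda v, s=s: [s * t for t in v] for s in range(1, 9)]
logits = [0.1, 2.0, -1.0, 0.5, 3.0, 0.0, -2.0, 1.0]
print(route_top_k(logits, k=2))  # experts 4 and 1 are selected
print(moe_layer([1.0, 2.0], experts, logits, k=2))
```

Compute per token scales with k rather than with the total number of experts. This is why total and active parameter counts differ so much.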

lfm2.5-8b-a1b

**LFM2.5-8B-A1B**

LFM2.5 is a new family of hybrid models designed for on-device deployment. It builds on the LFM2 architecture with extended pre-training and reinforcement learning.

- **On-device personal assistant**: Designed to power real-life applications, chaining tool calls and following complex instructions on all devices.
- **Compressed performance**: Competitive with much larger dense and MoE models on instruction following and agentic tasks.
- **Unmatched throughput**: Fastest in its size class on both CPU and GPU inference, with day-one support for llama.cpp, MLX, vLLM, and SGLang.

Find more information about LFM2.5-8B-A1B in our blog post. The AA-Omniscience Index (higher is better) rewards correct answers and penalizes hallucinations; scores range from -100 to 100. See more results on Artificial Analysis.

LFM2.5-8B-A1B is a general-purpose text-only model with the following features: ...

qwopus3.5-9b-coder-mtp

**🌟 Qwopus3.5-9B-v3.5**

Qwopus3.5-9B-v3.5 is a **data-scaled continuation** of the Qwopus3.5-9B-v3 model. The v3.5 training data is expanded to cover a broader range of domains, including mathematics, programming, puzzle-solving, multilingual dialogue, instruction-following, multi-turn interactions, and STEM-related tasks. Qwopus3.5-9B-v3.5 is a reasoning-enhanced model based on **Qwen3.5-9B**, designed for:
- 🧩 Structured reasoning
- 🔧 Tool-augmented workflows
- 🔁 Multi-step agentic tasks
- ⚡ Token-efficient inference

Compared with Qwopus3.5-9B-v3, **v3.5 does not introduce a new architecture, RL stage, or template redesign**. It is trained on approximately **2× more SFT data**.

The motivation behind v3.5 comes from a simple observation:

> This work is motivated by the hypothesis that scaling high-quality SFT data may further enhance the generalization ability of large language models.

In earlier Qwopus3.5 experiments, structured reasoning was observed to improve both **accuracy and efficiency**: ...

qwopus3.6-27b-v2-mtp

**🪐 Qwopus3.6-27B-v2-MTP** is an MTP (Multi-Token Prediction) release: a speed-oriented reasoning model fine-tuned from Qwen3.6-27B. Highlights: 🧬 Trace Inversion & Negentropy, 🧠 27B parameters, ⚡ speculative decoding, 🛠️ coding / DevOps / math.

It keeps the Qwopus line's focus on reconstructed reasoning traces, coding discipline, DevOps procedures, and mathematical derivations, while adding Multi-Token Prediction for faster generation. The goal is simple: preserve the depth and structure of a 27B reasoning model while making real interactive use noticeably faster.

- ⚡ **MTP Decoding**: Auxiliary future-token prediction improves throughput on long reasoning, code, math, and strict-format prompts.
- 🧩 **Structured Reasoning**: Inherits the Qwopus training recipe built around reconstructed step-by-step reasoning trajectories.
- 🧪 **GB10 Tested**: Validated on a 30-question local benchmark across Logic, Coding, DevOps, Math, and Edge tasks.
- 🚀 **Practical Speed**: Designed for workflows where strong answers matter but waiting several extra minutes per task is not acceptable. ...
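Multi-token prediction heads can serve as a built-in drafter for speculative decoding. The drafter cheaply guesses a few tokens ahead, and the full model verifies them, keeping the longest prefix it agrees with. The sketch below shows the generic greedy draft-and-verify loop. Two made-up toy functions stand in for the drafter and the full model. This is not Qwopus's or any runtime's actual implementation, and sampling-based acceptance at temperature > 0 works differently.

```python
from typing import Callable, List

# Toy "model": maps a token prefix to its greedy (argmax) next token.
NextToken = Callable[[List[int]], int]


def greedy_decode(target: NextToken, prompt: List[int], n_new: int) -> List[int]:
    """Baseline: one target call per generated token."""
    seq = list(prompt)
    for _ in range(n_new):
        seq.append(target(seq))
    return seq


def speculative_decode(target: NextToken, draft: NextToken, prompt: List[int],
                       n_new: int, k: int = 4) -> List[int]:
    """Greedy speculative decoding: keep the longest draft prefix the target agrees with."""
    seq = list(prompt)
    goal = len(prompt) + n_new
    while len(seq) < goal:
        # 1) The cheap drafter proposes up to k tokens ahead.
        proposal: List[int] = []
        for _ in range(min(k, goal - len(seq))):
            proposal.append(draft(seq + proposal))
        # 2) The target checks each position (a real engine does this in one batched pass).
        accepted: List[int] = []
        for tok in proposal:
            expected = target(seq + accepted)
            accepted.append(expected)  # equals tok whenever the draft was right
            if tok != expected:
                break  # first mismatch: keep the target's token, discard the rest
        else:
            # Every draft token matched, so verification also yields one bonus token.
            if len(seq) + len(accepted) < goal:
                accepted.append(target(seq + accepted))
        seq.extend(accepted)
    return seq


# Hypothetical toy models: the drafter agrees with the target only some of the time.
target = lambda s: (3 * s[-1] + len(s)) % 7
draft = lambda s: (3 * s[-1]) % 7
print(speculative_decode(target, draft, [1, 2], n_new=10))
```

Under greedy verification, the output is identical to plain greedy decoding with the target. The speedup comes only from verifying several drafted tokens per target pass.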

qwen3.6-40b-claude-4.6-opus-deckard-heretic-uncensored-thinking-neo-code-di-imatrix-max

The Qwen 3.5 version (also 40B) received 181+ likes. This version uses the new Qwen 3.6 27B architecture (which exceeds even Qwen's own 398B model).

WARNING: This model has character and intelligence. It will take no prisoners. It will give no quarter. Uncensored, unfiltered, and boldly confident. Not even remotely "SFW" if you ask it for NSFW content. And it is wickedly smart too, exceeding the base model in 6 out of 7 benchmarks.

Qwen3.6-40B-Claude-4.6-Opus-Deckard-Heretic-Uncensored-Thinking has 40 billion parameters (dense, not MoE). It was expanded from the 27B Qwen 3.6, then trained on a Claude 4.6 Opus High Reasoning dataset via Unsloth on local hardware... but there is much more to the story: in comes DECKARD. 96 layers, 1275 tensors (50% more than the 27B base model). It features variable-length reasoning: shorter for less complex prompts, longer for more complex ones. Model performance has increased dramatically. And it has character too. A lot of character. No censorship, no nanny (via Heretic). And it is very, very smart. ...

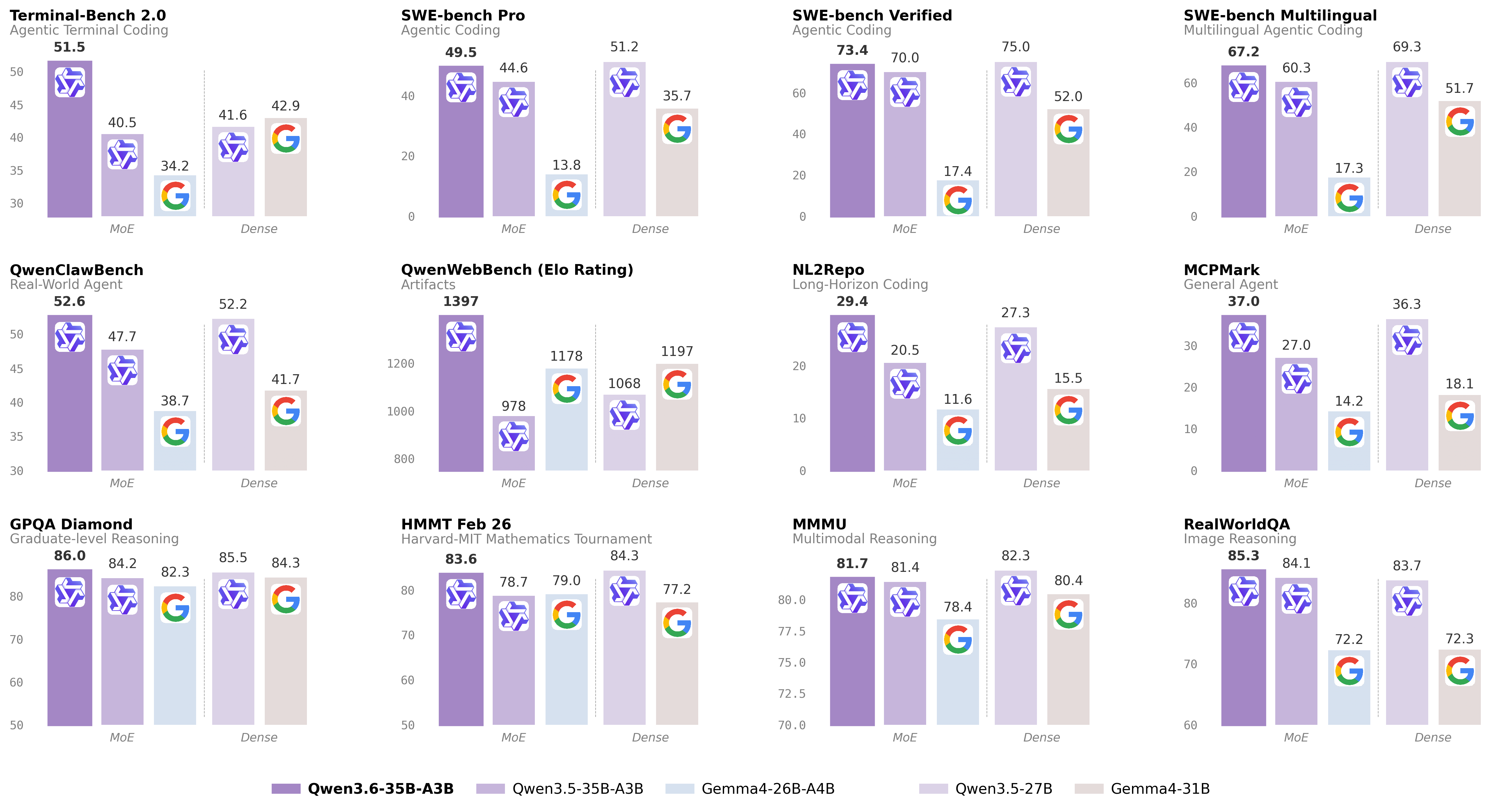

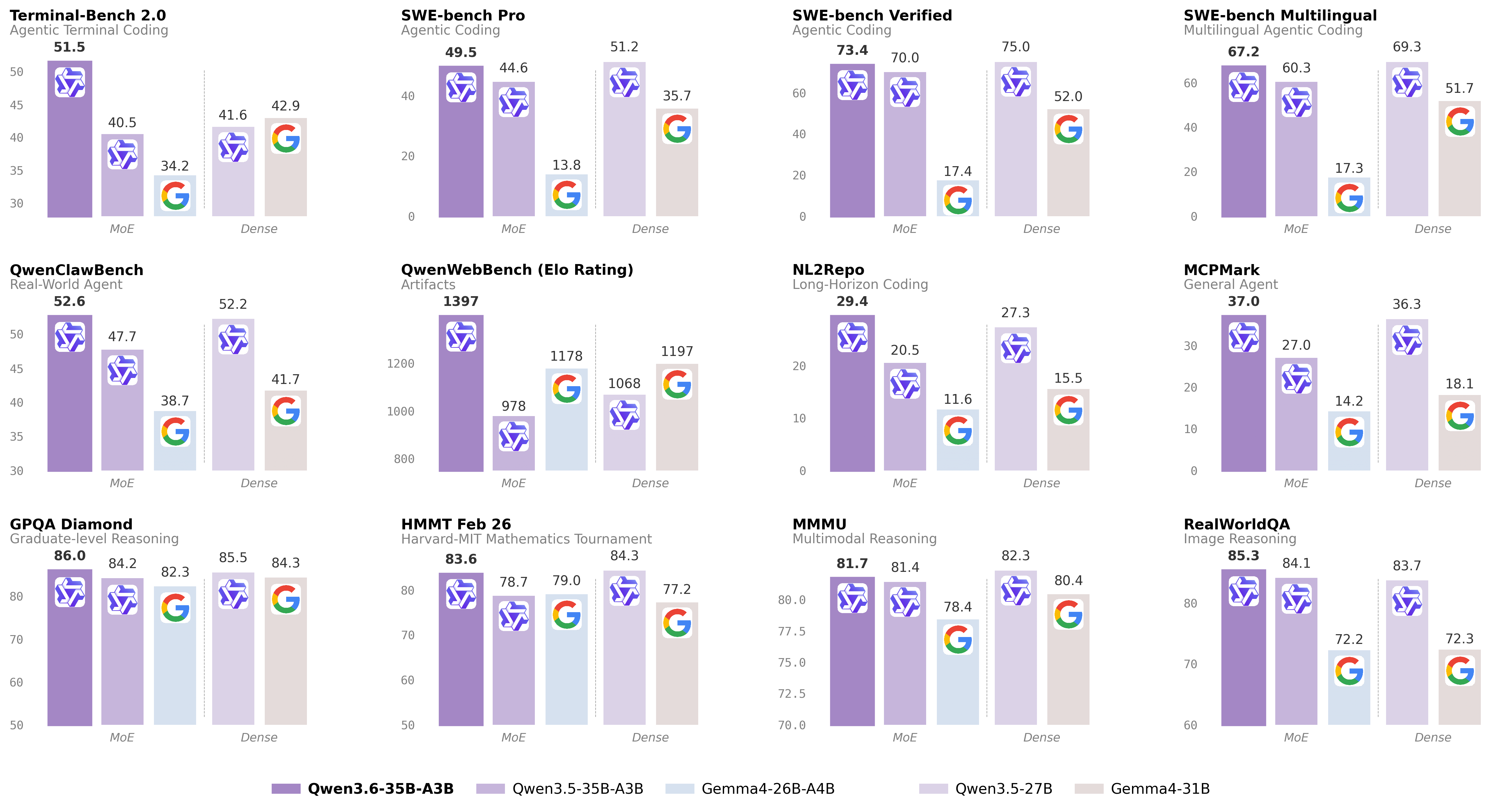

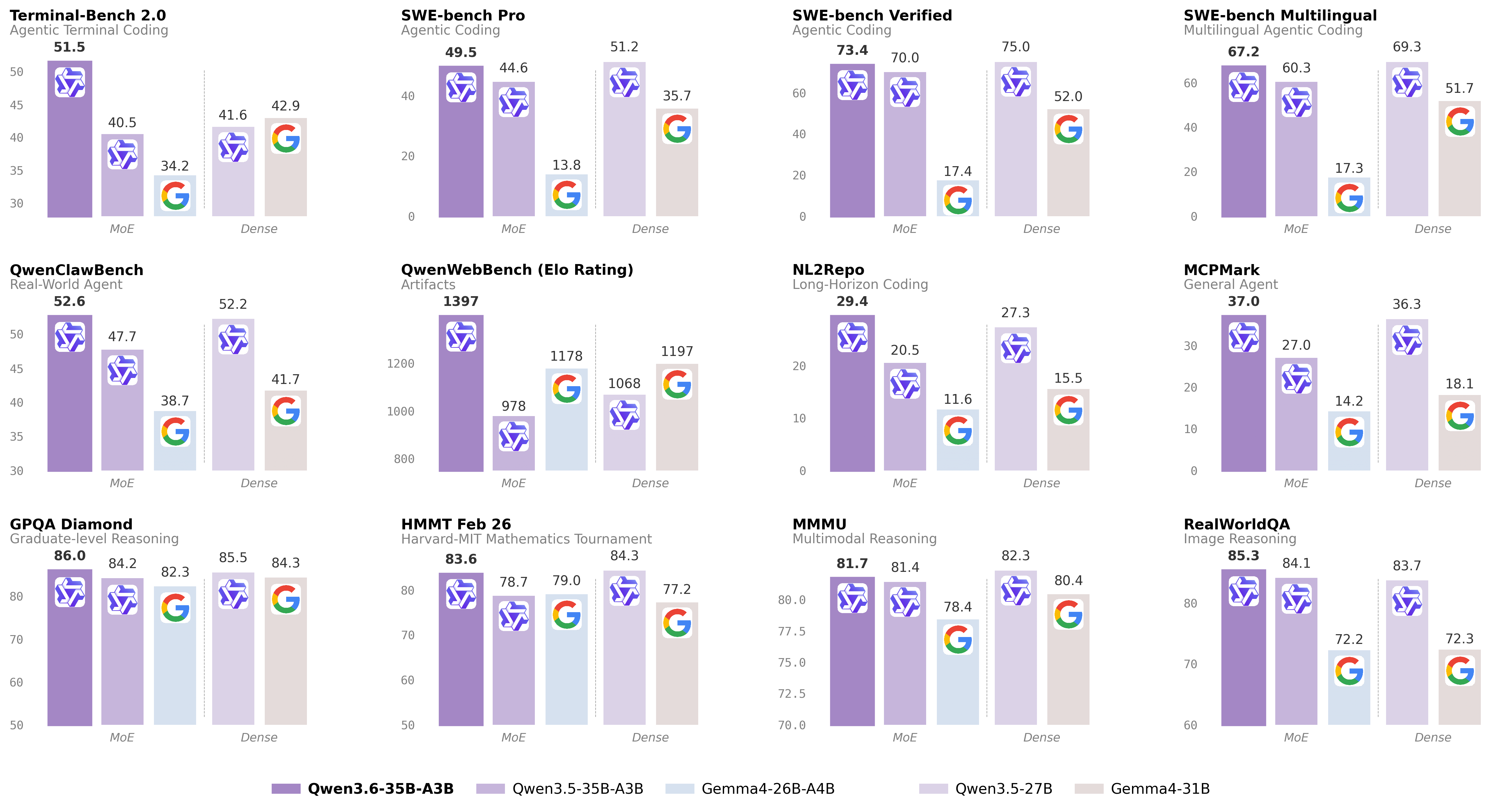

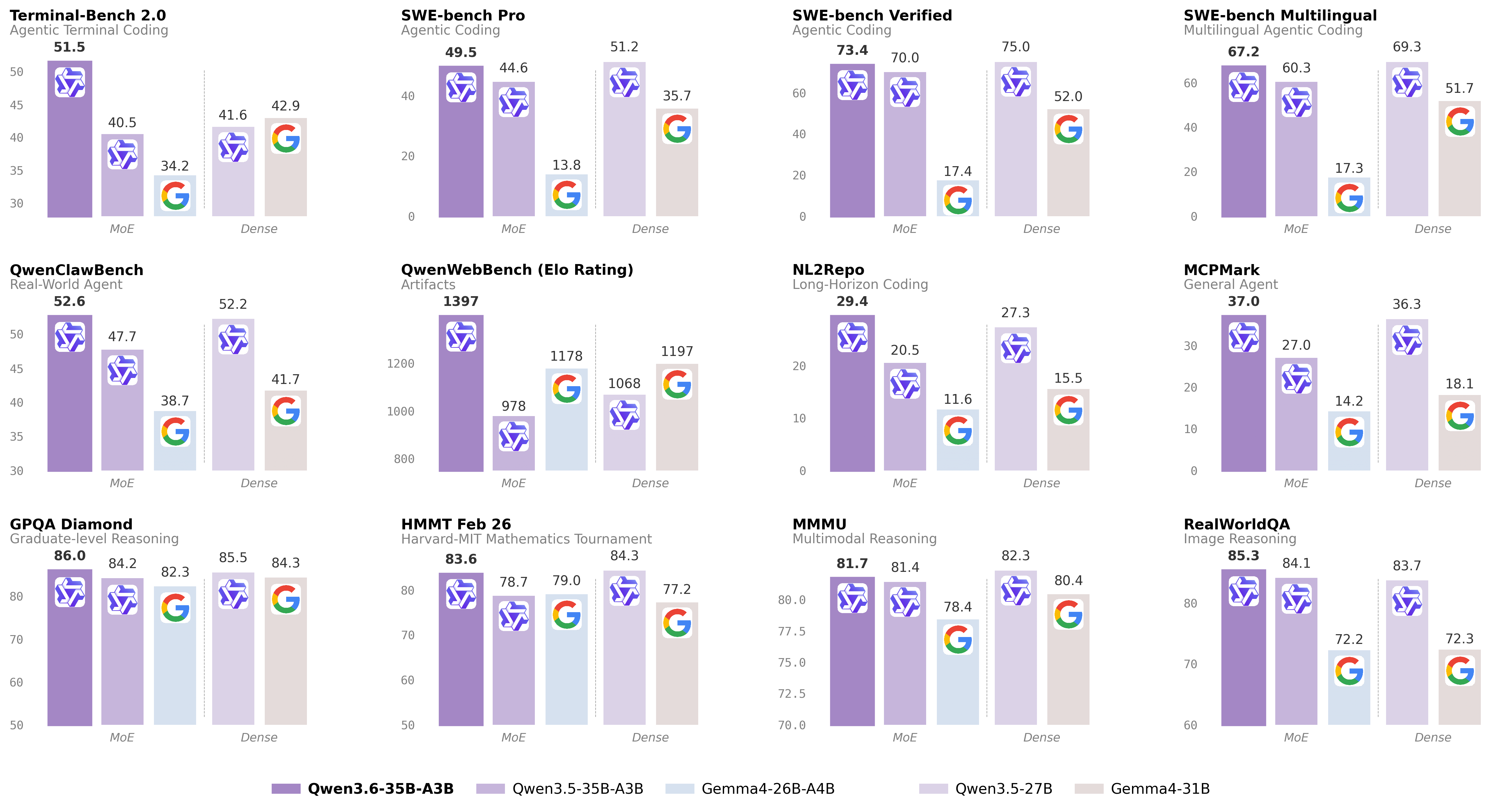

qwopus3.6-35b-a3b-v1

**Qwen3.6-35B-A3B**

> [!Note]
> This repository contains model weights and configuration files for the post-trained model in the Hugging Face Transformers format.
>
> These artifacts are compatible with Hugging Face Transformers, vLLM, SGLang, KTransformers, etc.

Following the February release of the Qwen3.5 series, we're pleased to share the first open-weight variant of Qwen3.6. Built on direct feedback from the community, Qwen3.6 prioritizes stability and real-world utility, offering developers a more intuitive, responsive, and genuinely productive coding experience.

This release delivers substantial upgrades, particularly in:
- **Agentic Coding:** the model now handles frontend workflows and repository-level reasoning with greater fluency and precision.
- **Thinking Preservation:** we've introduced a new option to retain reasoning context from historical messages, streamlining iterative development and reducing overhead.

For more details, please refer to our blog post Qwen3.6-35B-A3B. ...

qwen3.6-27b-heretic-uncensored-finetune-neo-code-di-imatrix-max

Qwen3.6-27B-Heretic2-Uncensored-Finetune-Thinking: yes, fully uncensored AND lightly fine-tuned. Freedom and brainpower.

It was trained on a different Heretic base, with different KLD/refusal figures. The fine-tune was used to finalize and "firm up" the Heretic/uncensored changes. The goal was light, minor fixes rather than a full, heavy fine-tune. Even so, the tuning still raised critical metrics. This is Version 2, which uses the "trohrbaugh" Heretic (lower refusal rate), and the tuning bumped the metrics up a bit further. It also improved the "NEO-Coder Di-Matrix" (dual imatrix) GGUF quants, relative to both the Heretic and non-Heretic quants.

https://huggingface.co/DavidAU/Qwen3.6-27B-Heretic-Uncensored-FINETUNE-NEO-CODE-Di-IMatrix-MAX-GGUF

In-house benchmarks [by Nightmedia], instruct mode, mxfp8:

| Model | arc-c | arc/e | boolq | hswag | obkqa | piqa | wino |
|---|---|---|---|---|---|---|---|
| Qwen3.6-27B-Heretic2-Uncensored-Finetune-Thinking | 0.673 | 0.846 | 0.905 | ... | ... | ... | ... |
| Qwen3.6-27B-Heretic-Uncensored-Finetune-Thinking | 0.669 | 0.835 | 0.906 | ... | ... | ... | ... |
| Qwen3.6-27B HERETIC, base untuned model (by llmfan46) | 0.644 | 0.788 | 0.902 | ... | ... | ... | ... |

...

qwen3.5-9b-deepseek-v4-flash

**Qwen3.5-9B**

> [!Note]
> This repository contains model weights and configuration files for the post-trained model in the Hugging Face Transformers format.
>
> These artifacts are compatible with Hugging Face Transformers, vLLM, SGLang, KTransformers, etc.

Over recent months, we have intensified our focus on developing foundation models that deliver exceptional utility and performance. Qwen3.5 represents a significant leap forward, integrating breakthroughs in multimodal learning, architectural efficiency, reinforcement learning scale, and global accessibility to empower developers and enterprises with unprecedented capability and efficiency.

Qwen3.5 features the following enhancements:
- **Unified Vision-Language Foundation**: Early fusion training on multimodal tokens achieves cross-generational parity with Qwen3 and outperforms Qwen3-VL models across reasoning, coding, agents, and visual understanding benchmarks.
- **Efficient Hybrid Architecture**: Gated Delta Networks combined with sparse Mixture-of-Experts deliver high-throughput inference with minimal latency and cost overhead. ...

chroma1-hd

Chroma1-HD is an 8.9B-parameter text-to-image foundation model derived from FLUX.1-schnell, with a parameter count reduced through architectural optimizations. It is designed as a base for creators, researchers, and downstream fine-tuning. Recommended inference settings: 40 steps, CFG 3.0, bfloat16.
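"CFG 3.0" is the classifier-free guidance scale. In the common formulation, each denoising step predicts once with the prompt and once without it, then extrapolates from the unconditional prediction toward the conditional one. A scale of 1.0 reduces to the plain conditional prediction. So 40 steps at CFG 3.0 typically cost about 80 model evaluations. The sketch below uses toy 2-element vectors standing in for the model's predictions. It illustrates the standard formula, not Chroma's actual pipeline.

```python
from typing import List


def cfg_combine(uncond: List[float], cond: List[float], scale: float) -> List[float]:
    """Classifier-free guidance: extrapolate from the unconditional toward the conditional prediction."""
    return [u + scale * (c - u) for u, c in zip(uncond, cond)]


# Toy "predictions" for one denoising step (hypothetical values).
uncond = [0.0, 1.0]
cond = [1.0, 1.0]
print(cfg_combine(uncond, cond, scale=3.0))  # [3.0, 1.0]
```

Only components where the prompt changes the prediction get amplified. Higher scales follow the prompt more strongly, at some cost to diversity.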

nemotron-3-nano-omni-30b-a3b-reasoning-apex

**Description:** NVIDIA Nemotron 3 Nano Omni is a multimodal large language model that unifies video, audio, image, and text understanding to support enterprise-grade Q&A, summarization, transcription, and document intelligence workflows. It extends the Nemotron Nano family with integrated video+speech comprehension, Graphical User Interface (GUI), Optical Character Recognition (OCR), and speech transcription capabilities. These enable end-to-end processing of rich enterprise content such as meeting recordings, M&E assets, training videos, and complex business documents.

NVIDIA Nemotron 3 Nano Omni was developed by NVIDIA as part of the Nemotron model family. This model is available for commercial use. It was improved using Qwen3-VL-30B-A3B-Instruct, Qwen3.5-122B-A10B, Qwen3.5-397B-A17B, Qwen2.5-VL-72B-Instruct, and gpt-oss-120b. For more information, see the Training Dataset section of the model card.

**License/Terms of Use:** Use of this model is governed by the NVIDIA Open Model Agreement.

**Deployment Geography:** Global ...

carnice-v2-27b

**Carnice-V2-27B for Hermes Agent**

Carnice-V2-27B is a fully merged BF16 SFT of `Qwen/Qwen3.6-27B` for Hermes-style agent traces. This repository contains the standalone merged model weights, not only a LoRA adapter.

**BF16 Transformers loading fix.** The BF16 safetensors were republished with corrected `Qwen3_5ForConditionalGeneration` tensor prefixes. The original merge artifact accidentally serialized an extra Unsloth wrapper prefix. As a result, direct HF Transformers loads reported the real weights as unexpected keys and initialized the expected layers randomly. GGUF files were not affected because the GGUF conversion path normalized those prefixes.

**Benchmarks.** The benchmark artifact bundle is included under `benchmarks/`. It contains the rendered graph, extracted `metrics.json`, benchmark scripts, and raw result files used to make the chart. Scope note: the IFEval run is a short `limit=20` A/B smoke benchmark, not an official full leaderboard score. Held-out loss/perplexity is the exact assistant-only, training-format validation metric from the SFT script. The raw BFCL two-case smoke files are included for auditability, but they are too small to support a model-quality claim. ...

kimi-k2.6

Kimi K2.6 is an open-source, native multimodal agentic model that advances practical capabilities in long-horizon coding, coding-driven design, proactive autonomous execution, and swarm-based task orchestration. Key features:
- **Long-Horizon Coding**: K2.6 achieves significant improvements on complex, end-to-end coding tasks, generalizing robustly across programming languages (Rust, Go, Python) and domains spanning front-end, DevOps, and performance optimization.
- **Coding-Driven Design**: K2.6 is capable of transforming simple prompts and visual inputs into production-ready interfaces and lightweight full-stack workflows, generating structured layouts, interactive elements, and rich animations with deliberate aesthetic precision.
- **Elevated Agent Swarm**: Scaling horizontally to 300 sub-agents executing 4,000 coordinated steps, K2.6 can dynamically decompose tasks into parallel, domain-specialized subtasks, delivering end-to-end outputs from documents to websites to spreadsheets in a single autonomous run.
- **Proactive & Open Orchestration**: For autonomous tasks, K2.6 demonstra ...

qwopus3.6-27b-v1-preview

**Qwen3.6-27B**

> [!Note]
> This repository contains model weights and configuration files for the post-trained model in the Hugging Face Transformers format.
>
> These artifacts are compatible with Hugging Face Transformers, vLLM, SGLang, KTransformers, etc.

Following the February release of the Qwen3.5 series, we're pleased to share the first open-weight variant of Qwen3.6. Built on direct feedback from the community, Qwen3.6 prioritizes stability and real-world utility, offering developers a more intuitive, responsive, and genuinely productive coding experience.

This release delivers substantial upgrades, particularly in:
- **Agentic Coding:** the model now handles frontend workflows and repository-level reasoning with greater fluency and precision.
- **Thinking Preservation:** we've introduced a new option to retain reasoning context from historical messages, streamlining iterative development and reducing overhead.

For more details, please refer to our blog post Qwen3.6-27B. ...

qwopus-glm-18b-merged

**🪐 Qwen3.5-9B-GLM5.1-Distill-v1**

- **Model Name:** `Jackrong/Qwen3.5-9B-GLM5.1-Distill-v1`
- **Base Model:** Qwen3.5-9B
- **Training Type:** Supervised Fine-Tuning (SFT, Distillation)
- **Parameter Scale:** 9B
- **Training Framework:** Unsloth

This model is a distilled variant of **Qwen3.5-9B**, trained on high-quality reasoning data derived from **GLM-5.1**. The primary goals are to:
- Improve **structured reasoning ability**
- Enhance **instruction-following consistency**
- Activate **latent knowledge via better reasoning structure**

Main training dataset: `Jackrong/GLM-5.1-Reasoning-1M-Cleaned`
- Cleaned from the original `Kassadin88/GLM-5.1-1000000x` dataset.
- Generated from a **GLM-5.1 teacher model**.
- Approximately **700x** the scale of `Qwen3.5-reasoning-700x`.
- Training used a **filtered subset**, not the full source dataset.

Auxiliary dataset: `Jackrong/Qwen3.5-reasoning-700x` ...

qwen3.6-27b

**Qwen3.6-27B**

> [!Note]
> This repository contains model weights and configuration files for the post-trained model in the Hugging Face Transformers format.
>
> These artifacts are compatible with Hugging Face Transformers, vLLM, SGLang, KTransformers, etc.

Following the February release of the Qwen3.5 series, we're pleased to share the first open-weight variant of Qwen3.6. Built on direct feedback from the community, Qwen3.6 prioritizes stability and real-world utility, offering developers a more intuitive, responsive, and genuinely productive coding experience.

This release delivers substantial upgrades, particularly in:
- **Agentic Coding:** the model now handles frontend workflows and repository-level reasoning with greater fluency and precision.
- **Thinking Preservation:** we've introduced a new option to retain reasoning context from historical messages, streamlining iterative development and reducing overhead.

For more details, please refer to our blog post Qwen3.6-27B. ...

qwen3.6-35b-a3b-claude-4.6-opus-reasoning-distilled

**🔥 Qwen3.6-35B-A3B-Claude-4.6-Opus-Reasoning-Distilled**

A reasoning SFT fine-tune of `Qwen/Qwen3.6-35B-A3B` on chain-of-thought (CoT) distillation data mostly sourced from Claude Opus 4.6. The goal is to preserve Qwen3.6's strong agentic coding and reasoning base while nudging the model toward structured, Claude Opus-style reasoning traces and more stable long-form problem solving. The training path is text-only: the Qwen3.6 base architecture includes a vision encoder, but this fine-tuning run did not train on image or video examples.

- **Developed by:** @hesamation
- **Base model:** `Qwen/Qwen3.6-35B-A3B`
- **License:** apache-2.0

This fine-tuning run is inspired by Jackrong/Qwen3.5-27B-Claude-4.6-Opus-Reasoning-Distilled, including its notebook/training workflow style and its Claude Opus reasoning-distillation direction.

**Benchmark results.** The MMLU-Pro pass used 70 questions per model: `--limit 5` across 14 MMLU-Pro subjects. Treat this as a smoke/comparative check, not a release-quality full benchmark. ...

qwen3.5-9b-glm5.1-distill-v1

**🪐 Qwen3.5-9B-GLM5.1-Distill-v1**

- **Model Name:** `Jackrong/Qwen3.5-9B-GLM5.1-Distill-v1`
- **Base Model:** Qwen3.5-9B
- **Training Type:** Supervised Fine-Tuning (SFT, Distillation)
- **Parameter Scale:** 9B
- **Training Framework:** Unsloth

This model is a distilled variant of **Qwen3.5-9B**, trained on high-quality reasoning data derived from **GLM-5.1**. The primary goals are to:
- Improve **structured reasoning ability**
- Enhance **instruction-following consistency**
- Activate **latent knowledge via better reasoning structure**

Main training dataset: `Jackrong/GLM-5.1-Reasoning-1M-Cleaned`
- Cleaned from the original `Kassadin88/GLM-5.1-1000000x` dataset.
- Generated from a **GLM-5.1 teacher model**.
- Approximately **700x** the scale of `Qwen3.5-reasoning-700x`.
- Training used a **filtered subset**, not the full source dataset.

Auxiliary dataset: `Jackrong/Qwen3.5-reasoning-700x` ...

supergemma4-26b-uncensored-v2

License: Apache 2.0 | Authors: Google DeepMind

Gemma is a family of open models built by Google DeepMind. Gemma 4 models are multimodal, handling text and image input (with audio supported on the small models) and generating text output. This release includes open-weight models in both pre-trained and instruction-tuned variants. Gemma 4 features a context window of up to 256K tokens and maintains multilingual support in over 140 languages. With both dense and Mixture-of-Experts (MoE) architectures, Gemma 4 is well suited for tasks like text generation, coding, and reasoning. The models are available in four distinct sizes: **E2B**, **E4B**, **26B A4B**, and **31B**. Their diverse sizes make them deployable in environments ranging from high-end phones to laptops and servers, democratizing access to state-of-the-art AI.

Gemma 4 introduces key **capability and architectural advancements**:
* **Reasoning** – All models in the family are designed as highly capable reasoners, with configurable thinking modes. ...

qwen3.6-35b-a3b-apex

**Qwen3.6-35B-A3B**

> [!Note]
> This repository contains model weights and configuration files for the post-trained model in the Hugging Face Transformers format.
>
> These artifacts are compatible with Hugging Face Transformers, vLLM, SGLang, KTransformers, etc.

Following the February release of the Qwen3.5 series, we're pleased to share the first open-weight variant of Qwen3.6. Built on direct feedback from the community, Qwen3.6 prioritizes stability and real-world utility, offering developers a more intuitive, responsive, and genuinely productive coding experience.

This release delivers substantial upgrades, particularly in:
- **Agentic Coding:** the model now handles frontend workflows and repository-level reasoning with greater fluency and precision.
- **Thinking Preservation:** we've introduced a new option to retain reasoning context from historical messages, streamlining iterative development and reducing overhead.

For more details, please refer to our blog post Qwen3.6-35B-A3B. ...

qwen3.6-35b-a3b

**Qwen3.6-35B-A3B**

> [!Note]
> This repository contains model weights and configuration files for the post-trained model in the Hugging Face Transformers format.
>
> These artifacts are compatible with Hugging Face Transformers, vLLM, SGLang, KTransformers, etc.

Following the February release of the Qwen3.5 series, we're pleased to share the first open-weight variant of Qwen3.6. Built on direct feedback from the community, Qwen3.6 prioritizes stability and real-world utility, offering developers a more intuitive, responsive, and genuinely productive coding experience.

This release delivers substantial upgrades, particularly in:
- **Agentic Coding:** the model now handles frontend workflows and repository-level reasoning with greater fluency and precision.
- **Thinking Preservation:** we've introduced a new option to retain reasoning context from historical messages, streamlining iterative development and reducing overhead.

For more details, please refer to our blog post Qwen3.6-35B-A3B. ...

gemma-4-26b-a4b-it-apex

AI model: gemma-4-26b-a4b-it-apex

gemma-4-26b-a4b-it

Google Gemma 4 26B-A4B-IT is an open-source multimodal Mixture-of-Experts model with 26B total parameters and 4B active parameters. It handles text and image input, generating text output, with a 256K context window and support for 140+ languages. The MoE architecture provides strong performance with efficient inference. Well-suited for question answering, summarization, reasoning, and image understanding tasks.

gemma-4-e2b-it

Google Gemma 4 E2B-IT is a lightweight open-source multimodal model with 5B total parameters and 2B effective parameters using selective parameter activation. It handles text and image input, generating text output, with a 256K context window and support for 140+ languages. Optimized for efficient execution on low-resource devices including mobile and laptops.

gemma-4-e4b-it

Google Gemma 4 E4B-IT is an open-source multimodal model with 8B total parameters and 4B effective parameters using selective parameter activation. It handles text and image input, generating text output, with a 256K context window and support for 140+ languages. Offers a good balance of performance and efficiency for deployment on consumer hardware.

gemma-4-31b-it

Google Gemma 4 31B-IT is the largest dense model in the Gemma 4 family with 31B parameters. It handles text and image input, generating text output, with a 256K context window and support for 140+ languages. Provides the highest quality outputs in the Gemma 4 lineup, well-suited for complex reasoning, summarization, and image understanding tasks.

qwen3.5-35b-a3b-apex

qwen_qwen3.5-35b-a3b

Qwen3.5-35B-A3B is a quantized multimodal language model with 35B total parameters. It uses a Mixture-of-Experts (MoE) architecture that activates about 3B parameters per token (the "A3B" in its name). It supports image-text understanding and chat interactions via the llama-cpp backend.
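The "A3B" suffix conventionally gives the active parameter count in billions. The same convention appears as "A4B" in gemma-4-26b-a4b-it and "A17B" in qwen3.5-397b-a17b. The hypothetical helper below reads total and active sizes from gallery names. It assumes the `<total>b-a<active>b` naming pattern, which the gallery does not enforce; names that don't follow it return `None`.

```python
import re
from typing import Optional, Tuple

# Assumption: "<total>b-a<active>b" in a model name gives total and active parameters in billions.
_MOE_NAME = re.compile(r"(\d+(?:\.\d+)?)b-a(\d+(?:\.\d+)?)b", re.IGNORECASE)


def moe_sizes(name: str) -> Optional[Tuple[float, float, float]]:
    """Return (total_b, active_b, active_fraction) parsed from a model name, or None."""
    m = _MOE_NAME.search(name)
    if m is None:
        return None
    total, active = float(m.group(1)), float(m.group(2))
    return total, active, active / total


for name in ("qwen_qwen3.5-35b-a3b", "gemma-4-26b-a4b-it", "qwen3.5-397b-a17b", "qwen3.5-27b"):
    print(name, moe_sizes(name))
```

For Qwen3.5-35B-A3B, this gives 3/35, or roughly 8.6% of the weights active per token. Memory use still scales with the full 35B, because all experts must be loaded.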

qwen3.5-27b-claude-4.6-opus-reasoning-distilled-heretic-i1

qwen_qwen3.5-0.8b

Qwen3.5-0.8B is a 0.8B-parameter model quantized for the llama-cpp backend. It supports chat interactions and multimodal image-text input.

qwen_qwen3.5-2b

Qwen3.5-2B is a highly efficient, instruction-tuned multilingual language model available in various quantized GGUF formats. Optimized for llama-cpp inference, it supports chat and completion tasks with strong performance on low-RAM hardware. The model is available in multiple quantization levels ranging from Q8_0 to IQ2_M to balance quality and resource usage.
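Q8_0 is the simplest of these levels. Weights are split into blocks of 32, and each block stores one fp16 scale `d = max|x| / 127` plus 32 signed 8-bit values. That works out to 8.5 bits per weight. Below is a minimal sketch of one block, assuming llama.cpp's reference Q8_0 layout. Lower levels such as IQ2_M use different, more elaborate schemes that are not shown here.

```python
import math
import struct
from typing import List, Tuple

QK8_0 = 32  # weights per Q8_0 block


def _round_half_away(v: float) -> int:
    """Round like C's roundf (halves away from zero); Python's round() rounds halves to even."""
    return int(math.floor(abs(v) + 0.5)) * (1 if v >= 0 else -1)


def quantize_q8_0_block(xs: List[float]) -> Tuple[float, List[int]]:
    """Quantize 32 floats to one fp16 scale d plus 32 int8 values in [-127, 127]."""
    assert len(xs) == QK8_0
    amax = max(abs(x) for x in xs)
    d = amax / 127.0
    inv_d = 1.0 / d if d else 0.0
    qs = [_round_half_away(x * inv_d) for x in xs]
    # The scale is stored as fp16: round-trip it through half precision ("e" format).
    d_fp16 = struct.unpack("<e", struct.pack("<e", d))[0]
    return d_fp16, qs


def dequantize_q8_0_block(d: float, qs: List[int]) -> List[float]:
    return [d * q for q in qs]


block = [math.sin(i) for i in range(QK8_0)]
d, qs = quantize_q8_0_block(block)
restored = dequantize_q8_0_block(d, qs)
print(max(abs(a - b) for a, b in zip(block, restored)))  # small reconstruction error
```

Because each block has its own scale, one outlier only coarsens the 31 weights sharing its block, not the whole tensor.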

qwen_qwen3.5-4b

Qwen3.5-4B is a multimodal LLM with 4 billion parameters, optimized for chat and vision tasks. This GGUF-quantized version enables efficient local inference via the llama-cpp backend and supports both text and image input.

qwen3.5-27b-claude-4.6-opus-reasoning-distilled-i1

Qwen3.5-27B-Claude-4.6-Opus-Reasoning-Distilled-i1-GGUF is a GGUF-quantized model optimized for local inference and specialized for reasoning and chain-of-thought tasks. It is based on the Qwen3.5 architecture and distilled from Claude-style reasoning models for stronger logical reasoning. It is available in multiple quantization levels to suit different hardware.
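Choosing a quantization level mostly comes down to bits per weight. Below is a back-of-the-envelope sketch for a 27B model. It assumes llama.cpp's Q8_0 and Q4_0 block layouts (32 weights plus one fp16 scale per block). Real GGUF files differ: some tensors stay at higher precision, k-quants and i-quants use other layouts, and metadata adds overhead. Treat the result as a rough estimate of weight memory, not a download size.

```python
# Bits per weight derived from the block layouts (assumption for illustration):
#   Q8_0: 32 int8 values + one fp16 scale per 32 weights -> 8.5 bits/weight
#   Q4_0: 32 4-bit values + one fp16 scale per 32 weights -> 4.5 bits/weight
BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q8_0": (32 * 8 + 16) / 32,
    "Q4_0": (32 * 4 + 16) / 32,
}


def estimate_weights_gb(n_params: float, quant: str) -> float:
    """Rough weight size in GB (10**9 bytes); ignores metadata and mixed-precision tensors."""
    return n_params * BITS_PER_WEIGHT[quant] / 8 / 1e9


for quant in ("F16", "Q8_0", "Q4_0"):
    print(quant, round(estimate_weights_gb(27e9, quant), 1), "GB")
```

For 27B parameters, this gives about 54.0 GB at F16, 28.7 GB at Q8_0, and 15.2 GB at Q4_0, before context (KV cache) memory.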

qwen3.5-4b-claude-4.6-opus-reasoning-distilled

Qwen3.5-4B-Claude-4.6-Opus-Reasoning-Distilled-GGUF is a GGUF-quantized model optimized for local inference and specialized for reasoning and chain-of-thought tasks. It is based on the Qwen3.5 architecture and distilled from Claude-style reasoning models for stronger logical reasoning. It is available in multiple quantization levels to suit different hardware.

q3.5-bluestar-27b

qwen3.5-9b

qwen3.5-397b-a17b

qwen3.5-27b

qwen3.5-122b-a10b

qwen_qwen3-next-80b-a3b-thinking

nanbeige4.1-3b-q8

Nanbeige4.1-3B is built upon Nanbeige4-3B-Base and is an enhanced iteration of our previous reasoning model, Nanbeige4-3B-Thinking-2511, achieved through further post-training with supervised fine-tuning (SFT) and reinforcement learning (RL). As a highly competitive open-source model at a small parameter scale, Nanbeige4.1-3B shows that compact models can simultaneously achieve robust reasoning, preference alignment, and effective agentic behavior. Key features:
- **Strong Reasoning**: Capable of solving complex, multi-step problems through sustained and coherent reasoning within a single forward pass, reliably producing correct answers on benchmarks like LiveCodeBench-Pro, IMO-Answer-Bench, and AIME 2026 I.
- **Robust Preference Alignment**: Outperforms same-scale models (e.g., Qwen3-4B-2507, Nanbeige4-3B-2511) and larger models (e.g., Qwen3-30B-A3B, Qwen3-32B) on Arena-Hard-v2 and Multi-Challenge.
- **Agentic Capability**: The first general small model to natively support deep-search tasks and sustain complex problem-solving with more than 500 rounds of tool invocation; excels on benchmarks like xBench-DeepSearch (75), Browse-Comp (39), and others.

nanbeige4.1-3b-q4

Nanbeige4.1-3B is built upon Nanbeige4-3B-Base and is an enhanced iteration of our previous reasoning model, Nanbeige4-3B-Thinking-2511, achieved through further post-training with supervised fine-tuning (SFT) and reinforcement learning (RL). As a highly competitive open-source model at a small parameter scale, Nanbeige4.1-3B shows that compact models can simultaneously achieve robust reasoning, preference alignment, and effective agentic behavior. Key features:
- **Strong Reasoning**: Capable of solving complex, multi-step problems through sustained and coherent reasoning within a single forward pass, reliably producing correct answers on benchmarks like LiveCodeBench-Pro, IMO-Answer-Bench, and AIME 2026 I.
- **Robust Preference Alignment**: Outperforms same-scale models (e.g., Qwen3-4B-2507, Nanbeige4-3B-2511) and larger models (e.g., Qwen3-30B-A3B, Qwen3-32B) on Arena-Hard-v2 and Multi-Challenge.
- **Agentic Capability**: The first general small model to natively support deep-search tasks and sustain complex problem-solving with more than 500 rounds of tool invocation; excels on benchmarks like xBench-DeepSearch (75), Browse-Comp (39), and others.

nemo-parakeet-tdt-0.6b

NVIDIA NeMo Parakeet TDT 0.6B v3 is an automatic speech recognition (ASR) model from NVIDIA's NeMo toolkit. Parakeet models are state-of-the-art ASR models trained on large-scale English audio data.

voxtral-mini-4b-realtime

Voxtral Mini 4B Realtime is a speech-to-text model from Mistral AI. It is a 4B parameter model optimized for fast, accurate audio transcription with low latency, making it ideal for real-time applications. The model uses the Voxtral architecture for efficient audio processing.

moonshine-tiny

Moonshine Tiny is a lightweight speech-to-text model optimized for fast transcription. It is designed for efficient on-device ASR with high accuracy relative to its size.

whisperx-tiny

WhisperX Tiny is a fast and accurate speech recognition model with speaker diarization capabilities. It is built on OpenAI's Whisper, with additional features for alignment and speaker segmentation.

omnilingual-0.3b-ctc-q8-sherpa

Omnilingual ASR CTC 300M (int8) is a multilingual automatic speech recognition model supporting 1,600+ languages. Based on Meta's omniASR_CTC_300M architecture (Wav2Vec2 with CTC head), quantized to int8 for efficient inference. Uses the sherpa-onnx backend with ONNX Runtime.

streaming-zipformer-en-sherpa

Streaming English ASR: sherpa-onnx zipformer transducer (int8, chunk-16 left-128). Low-latency real-time transcription with endpoint detection via sherpa-onnx's online recognizer. English-only; for multilingual offline ASR see omnilingual-0.3b-ctc-q8-sherpa.

silero-vad-sherpa

Silero VAD served through the sherpa-onnx backend. Uses the same ONNX weights as the dedicated silero-vad backend, loaded through sherpa-onnx's C VAD API. Pairs with the sherpa-onnx ASR entries for round-trip audio pipelines.
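A voice activity detector like Silero emits a speech probability for each short audio frame, while a downstream ASR model needs time segments. Below is a minimal thresholding sketch that turns per-frame probabilities into `(start, end)` times. The threshold, minimum segment length, and frame duration are illustrative assumptions. sherpa-onnx's VAD applies its own, more elaborate logic (for example, padding and minimum silence), so this is not its API.

```python
from typing import List, Optional, Tuple


def speech_segments(probs: List[float], frame_s: float, threshold: float = 0.5,
                    min_speech_frames: int = 3) -> List[Tuple[float, float]]:
    """Turn per-frame speech probabilities into (start, end) times in seconds."""
    segments: List[Tuple[float, float]] = []
    start: Optional[int] = None
    for i, p in enumerate(probs):
        if p >= threshold:
            if start is None:
                start = i  # speech begins
        elif start is not None:
            # Speech ended; drop segments that are too short to be real speech.
            if i - start >= min_speech_frames:
                segments.append((start * frame_s, i * frame_s))
            start = None
    # Close a segment that runs to the end of the audio.
    if start is not None and len(probs) - start >= min_speech_frames:
        segments.append((start * frame_s, len(probs) * frame_s))
    return segments


probs = [0.1, 0.9, 0.8, 0.95, 0.2, 0.7, 0.1, 0.6, 0.9, 0.9]
print(speech_segments(probs, frame_s=0.25))  # [(0.25, 1.0), (1.75, 2.5)]
```

The single high-probability frame at index 5 is discarded as too short. The minimum-length rule exists to filter out such blips before they reach the ASR model.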

vits-ljs-sherpa

VITS-LJS English single-speaker TTS served through the sherpa-onnx backend. Trained on the LJSpeech corpus at 22.05 kHz. Pairs with the sherpa-onnx ASR entries for round-trip audio pipelines.

voxcpm-1.5

VoxCPM 1.5 is an end-to-end text-to-speech (TTS) model from ModelBest. It features zero-shot voice cloning and high-quality speech synthesis capabilities.

neutts-air

NeuTTS Air is the world's first super-realistic, on-device TTS speech language model with instant voice cloning. Built on a 0.5B LLM backbone, it brings natural-sounding speech, real-time performance, and speaker cloning to local devices.

vllm-omni-z-image-turbo

Z-Image-Turbo via vLLM-Omni - A distilled version of Z-Image optimized for speed with only 8 NFEs (number of function evaluations). It offers sub-second inference latency on enterprise-grade H800 GPUs and fits within 16GB of VRAM. It excels at photorealistic image generation, bilingual text rendering (English and Chinese), and robust instruction adherence.

vllm-omni-wan2.2-t2v

Wan2.2-T2V-A14B via vLLM-Omni - Text-to-video generation model from Wan-AI. Generates high-quality videos from text prompts using a 14B parameter diffusion model.

vllm-omni-wan2.2-i2v

Wan2.2-I2V-A14B via vLLM-Omni - Image-to-video generation model from Wan-AI. Generates high-quality videos from images using a 14B parameter diffusion model.

vllm-omni-qwen3-omni-30b

Qwen3-Omni-30B-A3B-Instruct via vLLM-Omni - A large multimodal model (30B total parameters, about 3B activated per token) from the Alibaba Qwen team. Supports text, image, audio, and video understanding with text and speech output. Features native multimodal understanding across all modalities.

vllm-omni-qwen3-tts-custom-voice

Qwen3-TTS-12Hz-1.7B-CustomVoice via vLLM-Omni - Text-to-speech model from the Alibaba Qwen team with custom voice cloning capabilities. Generates natural-sounding speech with voice personalization.

ace-step-turbo

ACE-Step 1.5 Turbo is a music generation model that can create music from text descriptions, lyrics, or audio samples. Supports both simple text-to-music and advanced music generation with metadata like BPM, key scale, and time signature.

acestep-cpp-turbo

ACE-Step 1.5 Turbo (C++ / GGML) — native C++ music generation from text descriptions and lyrics. Two-stage pipeline: text-to-code (Qwen3 LM) + code-to-audio (DiT-VAE). Stereo 48kHz output. Uses Q8_0 quantized models for a good balance of quality and speed.

acestep-cpp-turbo-4b

ACE-Step 1.5 Turbo (C++ / GGML) with 4B LM — higher quality music generation from text and lyrics. Uses the larger 4B parameter LM for better metadata/code generation. Stereo 48kHz output.

vibevoice-cpp

VibeVoice Realtime 0.5B (C++ / GGML, Q8_0) - native C++ port of Microsoft VibeVoice via the vibevoice-cpp backend. 24kHz mono TTS with voice cloning from a single reference voice prompt. Default voice prompt: en-Carter_man.

vibevoice-cpp-asr

VibeVoice ASR 7B (C++ / GGML, Q4_K) - long-form speech-to-text with speaker diarization. Returns per-speaker JSON segments with start/end timestamps. English-only. ~10 GB download.
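Because this entry returns per-speaker JSON segments with timestamps, a common next step is aggregating them. The sketch below sums speaking time per speaker. The field names `speaker`, `start`, `end`, and `text` are hypothetical; check the actual response shape before relying on them.

```python
import json
from collections import defaultdict
from typing import Dict

def speaking_time(segments_json: str) -> Dict[str, float]:
    """Sum seconds of speech per speaker from a JSON list of diarized segments.

    Assumes (hypothetically) that each segment looks like
    {"speaker": ..., "start": <seconds>, "end": <seconds>, "text": ...}.
    """
    totals: Dict[str, float] = defaultdict(float)
    for seg in json.loads(segments_json):
        totals[str(seg["speaker"])] += float(seg["end"]) - float(seg["start"])
    return dict(totals)
```

Speaker IDs are normalized to strings so numeric and named labels aggregate the same way.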

qwen3-tts-cpp

Qwen3-TTS 0.6B (C++ / GGML) — native C++ text-to-speech from text input. Generates 24kHz mono audio. Supports 10 languages (en, zh, ja, ko, de, fr, es, it, pt, ru). Uses F16 GGUF models (~2 GB total).

qwen3-tts-cpp-customvoice

Qwen3-TTS 0.6B Custom Voice (C++ / GGML) — text-to-speech with voice cloning support. Generates 24kHz mono audio with optional reference audio for voice cloning via ECAPA-TDNN speaker embeddings. Supports 10 languages (en, zh, ja, ko, de, fr, es, it, pt, ru).

qwen3-coder-next-mxfp4_moe

The model is a quantized version of the base model **Qwen/Qwen3-Coder-Next** using the **MXFP4** quantization scheme. It is optimized for efficiency while retaining performance, making it suitable for deployments that need lightweight inference. The recommended generation parameters are temperature=1.0 and top_p=0.95.
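LocalAI serves models through an OpenAI-compatible `/v1/chat/completions` endpoint, so the recommended sampling values can be sent with each request. The minimal sketch below only builds the JSON body. The helper name is hypothetical, and POSTing the body to `http://localhost:8080/v1/chat/completions` assumes a default local LocalAI install.

```python
import json

# Sampling values quoted in the model description above.
RECOMMENDED_SAMPLING = {"temperature": 1.0, "top_p": 0.95}

def build_chat_request(prompt: str, model: str = "qwen3-coder-next-mxfp4_moe") -> str:
    """Return an OpenAI-style chat-completions JSON body with the recommended sampling."""
    body = {
        "model": model,  # gallery entry name
        "messages": [{"role": "user", "content": prompt}],
        **RECOMMENDED_SAMPLING,
    }
    return json.dumps(body)
```

Keeping the sampling values in one constant makes it easy to override them per call without touching the rest of the payload.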

deepseek-ai.deepseek-v3.2

This is a quantized version of the DeepSeek-V3.2 model by deepseek-ai, optimized for efficient deployment. It is intended for text generation (Hugging Face pipeline tag `text-generation`) and keeps the original DeepSeek-V3.2 architecture. For more details, refer to the [DevQuasar GGUF repository](https://github.com/DevQuasar/deepseek-ai.DeepSeek-V3.2-GGUF).

z-image-diffusers

Z-Image is the foundation model of the ⚡️-Image family, engineered for good quality, robust generative diversity, broad stylistic coverage, and precise prompt adherence. While Z-Image-Turbo is built for speed, Z-Image is a full-capacity, undistilled transformer designed to be the backbone for creators, researchers, and developers who require the highest level of creative freedom.

z-image-turbo-diffusers

🚀 Z-Image-Turbo – A distilled version of Z-Image that matches or exceeds leading competitors with only 8 NFEs (Number of Function Evaluations). It offers ⚡️sub-second inference latency⚡️ on enterprise-grade H800 GPUs and fits comfortably on consumer devices with 16 GB of VRAM. It excels in photorealistic image generation, bilingual text rendering (English & Chinese), and robust instruction adherence.
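An NFE is one call to the denoising network during sampling, so "8 NFEs" means the sampler evaluates the model eight times per image. The toy sketch below counts those calls in a plain Euler sampler over a scalar stand-in for an image. It is not Z-Image's actual scheduler. The noise schedule, the scalar state, and the dummy denoiser are all assumptions made for illustration.

```python
from typing import Callable, List, Tuple

def euler_sample(
    denoiser: Callable[[float, float], float],
    x: float,
    sigmas: List[float],
) -> Tuple[float, int]:
    """Toy Euler sampler over a scalar "image"; returns (sample, NFE count).

    `denoiser(x, sigma)` predicts the clean sample at noise level sigma.
    Each step evaluates it exactly once, so NFEs = len(sigmas) - 1.
    """
    nfe = 0
    for sigma, sigma_next in zip(sigmas[:-1], sigmas[1:]):
        denoised = denoiser(x, sigma)     # one function evaluation
        nfe += 1
        d = (x - denoised) / sigma        # slope dx/dsigma
        x = x + d * (sigma_next - sigma)  # Euler step to the next noise level
    return x, nfe

# A hypothetical 8-NFE schedule: 9 noise levels from 8.0 down to 0.0.
SCHEDULE = [8.0, 7.0, 6.0, 5.0, 4.0, 3.0, 2.0, 1.0, 0.0]
```

With a dummy denoiser that always predicts `0.0`, `euler_sample(lambda x, s: 0.0, 5.0, SCHEDULE)` walks the sample to `0.0` using exactly 8 evaluations. Distillation shortens the schedule so that fewer such calls suffice.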

glm-4.7-flash-derestricted

This model is a quantized version of GLM-4.7-Flash-Derestricted, derived from the base model `koute/GLM-4.7-Flash-Derestricted`. Its tags include "derestricted," "uncensored," and "unlimited." The quantized files (e.g., Q2_K, Q4_K_S, Q6_K) trade accuracy against size and speed, and the Q4_K_S and Q6_K variants are recommended for balanced performance. Several quantization schemes are provided for fast inference, though some types (such as IQ4_XS) are not available.

qwen3-tts-1.7b-custom-voice

Qwen3-TTS is a high-quality text-to-speech model supporting custom voice, voice design, and voice cloning.

qwen3-tts-0.6b-custom-voice

Qwen3-TTS is a high-quality text-to-speech model supporting custom voice, voice design, and voice cloning.

fish-speech-s2-pro

Fish Speech S2-Pro is a high-quality text-to-speech model supporting voice cloning via reference audio. Uses a two-stage pipeline: text to semantic tokens (LLaMA-based) then semantic to audio (DAC decoder).

qwen3-asr-1.7b

Qwen3-ASR is an automatic speech recognition model supporting multiple languages and batch inference.

qwen3-asr-0.6b

Qwen3-ASR is an automatic speech recognition model supporting multiple languages and batch inference.

huihui-glm-4.7-flash-abliterated-i1

The model is a quantized version of **huihui-ai/Huihui-GLM-4.7-Flash-abliterated**, an abliterated (refusal-removed) variant of GLM-4.7-Flash, packaged as GGUF files at various quantization levels (e.g., IQ1_M, IQ2_XXS, Q4_K_M) for low-resource deployment. Key features include:
- **Base Model**: huihui-ai/Huihui-GLM-4.7-Flash-abliterated (the unquantized weights).
- **Quantization**: IQ1_M through Q4_K_M, trading accuracy for size.
- **Use Cases**: Lightweight inference, such as on edge devices or in other resource-constrained environments.
- **Downloads**: GGUF files of varying quality and size (e.g., 0.2GB to 18.2GB).
- **Tags**: Abliterated, uncensored.

The abliterated model is itself a modified version of GLM-4.7-Flash; this repository packages it as quantized weights for deployment.

mox-small-1-i1

**vanta-research/mox-small-1** is a small text-generation model optimized for conversational AI, supporting chat, persona research, and chatbot applications. Quantized versions (e.g., i1-Q4_K_M, i1-Q4_K_S) are available for efficient deployment, with the i1-Q4_K_S variant described as offering the best balance of size, speed, and quality. The model is designed for lightweight inference and is compatible with frameworks like Hugging Face Transformers.

glm-4.7-flash

**GLM-4.7-Flash** is a 30B-A3B MoE (Mixture of Experts) model designed for efficient deployment: it has about 30B total parameters, of which roughly 3B are activated per token. It is reported to perform strongly on benchmarks such as AIME 25, GPQA, and τ²-Bench while balancing accuracy and efficiency. It supports inference via frameworks like vLLM and SGLang, with detailed deployment instructions in the official repository, and suits applications that need high-quality text generation with modest resource consumption.
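The "30B-A3B" naming separates two budgets. Weight memory scales with the total parameter count, because all experts are loaded. Per-token compute scales with the active count. The back-of-the-envelope sketch below makes that split concrete. The function name is hypothetical, and the 4 bits per weight used in the example is an assumption: real GGUF K-quants usually spend somewhat more than their nominal bit width, and KV cache and activations come on top.

```python
from typing import Tuple

def moe_budget(total_params_b: float, active_params_b: float,
               bits_per_weight: float) -> Tuple[float, float]:
    """Rough MoE sizing under stated assumptions.

    Returns (approximate weight size in GB, fraction of parameters active per token).
    Ignores KV cache, activations, and per-block quantization overhead.
    """
    if not 0 < active_params_b <= total_params_b:
        raise ValueError("active params must be positive and <= total params")
    # Billions of params * bytes per param = gigabytes of weights.
    weight_gb = total_params_b * bits_per_weight / 8
    return weight_gb, active_params_b / total_params_b
```

With `moe_budget(30, 3, 4)`, the weights come to about 15 GB, yet only about 10% of them participate in any single token's forward pass.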

qwen3-vl-embedding-8b

**Model Name:** Qwen3-VL-Embedding-8B
**Base Model:** Qwen/Qwen3-VL-8B-Instruct

**Description:** The **Qwen3-VL-Embedding** and **Qwen3-VL-Reranker** model series are the latest additions to the Qwen family, built on the open-source Qwen3-VL foundation model. Designed for multimodal information retrieval and cross-modal understanding, the suite accepts text, images, screenshots, and videos, as well as inputs that mix these modalities.

**Key Features:**
- Model Type: Multimodal Embedding
- Supported Languages: 30+
- Supported Input Modalities: Text, images, screenshots, videos, and arbitrary multimodal combinations (e.g., text + image, text + video)
- Number of Parameters: 8B
- Context Length: 32k
- Embedding Dimension: Up to 4096; user-defined output dimensions from 64 to 4096 are supported

**Downloads:**
- [GGUF Files](https://huggingface.co/Qwen/Qwen3-VL-Embedding-8B) (e.g., `Qwen3-VL-Embedding-8B-Q8_0.gguf`).

**Usage:**
- Requires `transformers`, `qwen-vl-utils`, and `torch`.
- Example: `from scripts.qwen3_vl_embedding import Qwen3VLEmbedder; model = Qwen3VLEmbedder(...)`

**Citation:** @article{qwen3vlembedding, ...}
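User-defined output dimensions are commonly implemented Matryoshka-style: keep the leading components of the full vector and re-normalize. The sketch below assumes this model works the same way, as the text-only Qwen3-Embedding series does, so treat that as an assumption. It also does not enforce the 64-dimension minimum stated above. Both helper names are hypothetical.

```python
import math
from typing import List

def truncate_embedding(vec: List[float], dim: int) -> List[float]:
    """Keep the first `dim` components and L2-normalize the result."""
    if not 0 < dim <= len(vec):
        raise ValueError(f"dim must be in 1..{len(vec)}")
    head = vec[:dim]
    norm = math.sqrt(sum(x * x for x in head))
    if norm == 0.0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in head]

def cosine(a: List[float], b: List[float]) -> float:
    """Cosine similarity of two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))
```

Query and document vectors must be truncated to the same `dim` before comparison. Smaller dimensions cut index size at some cost in retrieval quality.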

qwen3-vl-embedding-2b

**Model Name:** Qwen3-VL-Embedding-2B
**Base Model:** Qwen/Qwen3-VL-2B-Instruct

**Description:** The **Qwen3-VL-Embedding** and **Qwen3-VL-Reranker** model series are the latest additions to the Qwen family, built on the open-source Qwen3-VL foundation model. Designed for multimodal information retrieval and cross-modal understanding, the suite accepts text, images, screenshots, and videos, as well as inputs that mix these modalities.

**Key Features:**
- Model Type: Multimodal Embedding
- Supported Languages: 30+
- Supported Input Modalities: Text, images, screenshots, videos, and arbitrary multimodal combinations (e.g., text + image, text + video)
- Number of Parameters: 2B
- Context Length: 32k
- Embedding Dimension: Up to 2048; user-defined output dimensions from 64 to 2048 are supported

**Downloads:**
- [GGUF Files](https://huggingface.co/Qwen/Qwen3-VL-Embedding-2B) (e.g., `Qwen3-VL-Embedding-2B-Q8_0.gguf`).

**Usage:**
- Requires `transformers`, `qwen-vl-utils`, and `torch`.
- Example: `from scripts.qwen3_vl_embedding import Qwen3VLEmbedder; model = Qwen3VLEmbedder(...)`

**Citation:** @article{qwen3vlembedding, ...}

qwen3-vl-reranker-8b

**Model Name:** Qwen3-VL-Reranker-8B
**Base Model:** Qwen/Qwen3-VL-Reranker-8B

**Description:** A high-performance multimodal reranking model for state-of-the-art cross-modal search. It supports 30+ languages and handles text, images, screenshots, videos, and mixed modalities. With 8B parameters and a 32K context length, it refines retrieval results by assigning a relevance score to each (query, candidate) pair, typically after an embedding model has retrieved the candidates. It is available in quantized versions (e.g., Q8_0, Q4_K_M) and suits applications that require accurate multimodal content matching.

**Key Features:**
- **Multimodal**: Text, images, videos, and mixed content.
- **Language Support**: 30+ languages.
- **Quantization**: Available in Q8_0 (best quality), Q4_K_M (fast, recommended), and lower-precision options.
- **Performance**: Outperforms base models in retrieval tasks (e.g., JinaVDR, ViDoRe v3).
- **Use Case**: Improves search pipelines by re-scoring retrieved candidates with precise relevance scores.

**Downloads:**
- [GGUF Files](https://huggingface.co/mradermacher/Qwen3-VL-Reranker-8B-GGUF) (e.g., `Qwen3-VL-Reranker-8B.Q8_0.gguf`).

**Usage:**
- Requires `transformers`, `qwen-vl-utils`, and `torch`.
- Example: `from scripts.qwen3_vl_reranker import Qwen3VLReranker; model = Qwen3VLReranker(...)`

**Citation:** @article{qwen3vlembedding, ...}
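In a two-stage search pipeline, an embedding model retrieves candidates cheaply, and the reranker then scores each (query, candidate) pair and reorders them. The sketch below shows only that reordering step. `toy_overlap_score` is a hypothetical lexical stand-in for the reranker's relevance score; in practice the score would come from the model.

```python
from typing import Callable, List, Tuple

def rerank(
    query: str,
    documents: List[str],
    score: Callable[[str, str], float],
    top_n: int = 3,
) -> List[Tuple[int, float]]:
    """Score every (query, document) pair; return the top_n (index, score) pairs."""
    scored = [(i, score(query, doc)) for i, doc in enumerate(documents)]
    scored.sort(key=lambda pair: pair[1], reverse=True)  # stable sort: ties keep input order
    return scored[:top_n]

def toy_overlap_score(query: str, doc: str) -> float:
    """Hypothetical stand-in for the reranker: fraction of query words present in doc."""
    q = set(query.lower().split())
    d = set(doc.lower().split())
    return len(q & d) / len(q) if q else 0.0
```

Returning indices rather than documents lets the caller map results back to the original candidate list, including any non-text items such as images.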

qwen3-vl-reranker-2b-i1

**Model Name:** Qwen3-VL-Reranker-2B-i1
**Base Model:** Qwen/Qwen3-VL-Reranker-2B

**Description:** A high-performance multimodal reranking model for state-of-the-art cross-modal search. It supports 30+ languages and handles text, images, screenshots, videos, and mixed modalities. With 2B parameters and a 32K context length, it refines retrieval results by assigning a relevance score to each (query, candidate) pair, typically after an embedding model has retrieved the candidates. It is available in quantized versions (e.g., Q8_0, Q4_K_M) and suits applications that require accurate multimodal content matching.

**Key Features:**
- **Multimodal**: Text, images, videos, and mixed content.
- **Language Support**: 30+ languages.
- **Quantization**: Available in Q8_0 (best quality), Q4_K_M (fast, recommended), and lower-precision options.
- **Performance**: Outperforms base models in retrieval tasks (e.g., JinaVDR, ViDoRe v3).
- **Use Case**: Improves search pipelines by re-scoring retrieved candidates with precise relevance scores.

**Downloads:**
- [GGUF Files](https://huggingface.co/mradermacher/Qwen3-VL-Reranker-2B-i1-GGUF) (e.g., `Qwen3-VL-Reranker-2B.i1-Q4_K_M.gguf`).

**Usage:**
- Requires `transformers`, `qwen-vl-utils`, and `torch`.
- Example: `from scripts.qwen3_vl_reranker import Qwen3VLReranker; model = Qwen3VLReranker(...)`

**Citation:** @article{qwen3vlembedding, ...}

liquidai.lfm2-2.6b-transcript

This is a 2.6B-parameter language model for text-generation tasks. It is a quantized version of `LiquidAI/LFM2-2.6B-Transcript`, optimized for efficiency while retaining strong performance, and is intended for transcript processing as well as general language modeling. It is trained on large-scale text data and supports multiple languages.

lfm2.5-1.2b-nova-function-calling

The **LFM2.5-1.2B-Nova-Function-Calling-GGUF** is a quantized version of the original model, optimized for efficiency with **Unsloth**. It supports text and multimodal tasks, using different quantization levels (e.g., Q2_K, Q3_K, Q4_K, etc.) to balance performance and memory usage. The model is designed for function calling and is faster than the original version, making it suitable for tasks like code generation, reasoning, and multi-modal input processing.

lfm2.5-audio-1.5b-realtime

LFM2.5-Audio-1.5B is LiquidAI's any-to-any audio foundation model. The 1.2B LFM2.5 backbone plus a FastConformer audio encoder and an LFM2-based audio detokenizer give real-time speech-to-speech with text + audio output interleaved at 12.5 Hz / 24 kHz. This entry runs in S2S (speech-to-speech) mode and is the model the LocalAI realtime API any-to-any path consumes. Switch to ASR, TTS, or chat by picking the sibling gallery entries.
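If the 12.5 Hz / 24 kHz figures are read as the model's frame rate and its output sample rate (an interpretation, not something the entry spells out), each frame covers 24000 / 12.5 = 1920 samples, or 80 ms of audio. The helpers below capture that arithmetic. Their names are hypothetical.

```python
import math

def samples_per_frame(sample_rate_hz: float, frame_rate_hz: float) -> float:
    """Number of output audio samples produced per model frame."""
    return sample_rate_hz / frame_rate_hz

def frame_ms(frame_rate_hz: float) -> float:
    """Duration of one frame in milliseconds."""
    return 1000.0 / frame_rate_hz

def frames_for(seconds: float, frame_rate_hz: float = 12.5) -> int:
    """Frames needed to cover `seconds` of audio, rounded up."""
    return math.ceil(seconds * frame_rate_hz)
```

Under this reading, two seconds of speech needs `frames_for(2.0)` = 25 frames, which bounds how quickly the first audio can be emitted.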

lfm2.5-audio-1.5b-chat

LFM2.5-Audio-1.5B in text-only chat mode. The model runs `generate_sequential` with no audio modality, behaving like a small LFM2 chat model. Pick this entry for tool-calling experiments without the audio overhead.
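For tool-calling experiments, requests typically use the OpenAI `tools` schema on `/v1/chat/completions`. The sketch below only builds such a body. The `get_weather` tool is hypothetical, and whether this entry's backend honors `tools` is an assumption to verify.

```python
import json

def build_tool_call_request(prompt: str) -> str:
    """Return a chat-completions JSON body declaring one (hypothetical) function tool."""
    body = {
        "model": "lfm2.5-audio-1.5b-chat",
        "messages": [{"role": "user", "content": prompt}],
        "tools": [
            {
                "type": "function",
                "function": {
                    "name": "get_weather",  # hypothetical tool for illustration
                    "description": "Get the current weather for a city.",
                    "parameters": {  # JSON Schema for the tool arguments
                        "type": "object",
                        "properties": {"city": {"type": "string"}},
                        "required": ["city"],
                    },
                },
            }
        ],
    }
    return json.dumps(body)
```

If the model decides to call the tool, the response should carry a `tool_calls` entry whose arguments match the declared schema.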

lfm2.5-audio-1.5b-asr

LFM2.5-Audio-1.5B in ASR mode. System prompt `Perform ASR.` is prepended; output is capitalised and punctuated. Wire this entry as a transcription model on the /v1/audio/transcriptions endpoint.
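The transcription endpoint takes an OpenAI-style multipart form with `file` and `model` fields. The stdlib-only sketch below builds, but does not send, such a request. The base URL `http://localhost:8080` assumes a default local LocalAI install, and the helper name is hypothetical.

```python
import urllib.request
import uuid

def build_transcription_request(
    audio: bytes,
    filename: str = "clip.wav",
    model: str = "lfm2.5-audio-1.5b-asr",
    base_url: str = "http://localhost:8080",  # assumed LocalAI address
) -> urllib.request.Request:
    """Build (but do not send) a multipart POST to /v1/audio/transcriptions."""
    boundary = uuid.uuid4().hex
    model_part = (
        f"--{boundary}\r\n"
        'Content-Disposition: form-data; name="model"\r\n\r\n'
        f"{model}\r\n"
    ).encode()
    file_head = (
        f"--{boundary}\r\n"
        f'Content-Disposition: form-data; name="file"; filename="{filename}"\r\n'
        "Content-Type: application/octet-stream\r\n\r\n"
    ).encode()
    closing = f"\r\n--{boundary}--\r\n".encode()
    return urllib.request.Request(
        f"{base_url}/v1/audio/transcriptions",
        data=model_part + file_head + audio + closing,
        headers={"Content-Type": f"multipart/form-data; boundary={boundary}"},
        method="POST",
    )
```

Sending it with `urllib.request.urlopen(req)` should return JSON that, as in the OpenAI API, includes a `text` field with the punctuated transcript.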

lfm2.5-audio-1.5b-tts

LFM2.5-Audio-1.5B in TTS mode. Four baked voices: us_male, us_female, uk_male, uk_female — pick the default at load time via `voice:` option, or override per-request via the OpenAI `/v1/audio/speech` `voice` field.
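A per-request voice override uses the `voice` field of the OpenAI-style `/v1/audio/speech` body. The sketch below builds that body and rejects voices outside the four listed above. The helper name and the `uk_female` default are illustrative choices.

```python
import json

# The four baked voices listed in the entry above.
VOICES = {"us_male", "us_female", "uk_male", "uk_female"}

def build_speech_request(text: str, voice: str = "uk_female") -> str:
    """Return a JSON body for POST /v1/audio/speech with a per-request voice."""
    if voice not in VOICES:
        raise ValueError(f"unknown voice {voice!r}; expected one of {sorted(VOICES)}")
    return json.dumps({"model": "lfm2.5-audio-1.5b-tts", "input": text, "voice": voice})
```

Validating on the client side surfaces typos early instead of relying on whatever fallback the server applies.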

mistral-nemo-instruct-2407-12b-thinking-m-claude-opus-high-reasoning-i1

This model is based on **Mistral-Nemo-Instruct-2407-12B** (12 billion parameters), a large language model, and is tuned for instruction following and high-level reasoning. It is a **quantized version**, compressed for efficiency while retaining key capabilities. The model is designed to generate human-like text and perform complex reasoning, making it suitable for applications that require strong language understanding and output.

rwkv7-g1c-13.3b

The model is **RWKV7 g1c 13B**, a large language model optimized for efficiency. It is quantized using **Bartowski's calibrationv5 for imatrix** to reduce memory usage while maintaining performance. The base model is **BlinkDL/rwkv7-g1**, and this version is tailored for text-generation tasks. It balances accuracy and efficiency, making it suitable for deployment in various applications.

iquest-coder-v1-40b-instruct-i1

The **IQuest-Coder-V1-40B-Instruct-i1-GGUF** is a quantized version of the original **IQuestLab/IQuest-Coder-V1-40B-Instruct** model, designed for efficient deployment. It is an **instruction-following large language model** with 40 billion parameters, optimized for tasks like code generation and reasoning.

**Key Features:**
- **Size:** 40B parameters (quantized for efficiency).
- **Purpose:** Instruction-based coding and reasoning.
- **Format:** GGUF (supports multi-part files).
- **Quantization:** Uses advanced quantization types (e.g., IQ3_M, Q4_K_M) to balance size and quality.

**Available Quantizations:**
- **i1-Q4_K_M** (recommended) for a good balance of speed and size.
- Lower-bit options that trade quality for a smaller size.

**Note:** This repository contains quantized weights; the unquantized base model (IQuestLab/IQuest-Coder-V1-40B-Instruct) is the official source. For full fidelity, use the unquantized version, and verify compatibility with your deployment tools.

onerec-8b

The model `mradermacher/OneRec-8B-GGUF` is a quantized version of the base model `OpenOneRec/OneRec-8B`, a large language model designed for tasks like recommendation and content generation. It is offered in several quantization schemes (e.g., Q2_K, Q4_K, Q8_0) with file sizes from 3.5 GB to 9.0 GB. The model uses the GGUF format and is licensed under Apache-2.0. Key features include:
- **Base Model**: `OpenOneRec/OneRec-8B` (a pre-trained language model for recommendations).
- **Quantization**: Multiple quantized variants (Q2_K, Q3_K, Q4_K, etc.), with `Q4_K_S` and `Q8_0` highlighted as the preferred choices.
- **Sizes**: From 3.5 GB (Q2_K) to 9.0 GB (Q8_0); lower-bit variants are smaller and faster.
- **Usage**: Runs with GGUF-compatible runtimes and suits applications that need efficient inference.
- **License**: Apache-2.0, available at [https://huggingface.co/OpenOneRec/OneRec-8B/blob/main/LICENSE](https://huggingface.co/OpenOneRec/OneRec-8B/blob/main/LICENSE).

For detailed specifications, refer to the [model page](https://hf.tst.eu/model#OneRec-8B-GGUF).
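Choosing among the listed quantizations usually comes down to the largest file that fits in available memory with some headroom left. The sketch below encodes that rule. It assumes that a larger file means higher quality, which holds roughly for these quant types, and the 1 GB default headroom for context and runtime overhead is an arbitrary assumption.

```python
from typing import Dict, Optional

def pick_quant(sizes_gb: Dict[str, float], budget_gb: float,
               headroom_gb: float = 1.0) -> Optional[str]:
    """Return the largest quant whose file fits in budget_gb minus headroom, or None."""
    usable = budget_gb - headroom_gb
    fitting = {name: size for name, size in sizes_gb.items() if size <= usable}
    if not fitting:
        return None
    return max(fitting, key=lambda name: fitting[name])
```

With the two sizes quoted above, `pick_quant({"Q2_K": 3.5, "Q8_0": 9.0}, 12.0)` picks `"Q8_0"`, while an 8 GB budget falls back to `"Q2_K"`.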

minimax-m2.1-i1

The model **MiniMax-M2.1** (base model: *MiniMaxAI/MiniMax-M2.1*) is a large language model quantized for efficient deployment. The GGUF quantizations come in several variants with different speed, memory, and quality trade-offs, and the model is licensed under the *modified-mit* license. Key features:
- **Quantized versions**: Low-precision (IQ1, IQ2, Q2_K, etc.) and higher-precision (Q4_K_M, Q6_K) options.
- **Usage**: Requires GGUF files; if you are unsure how to use them, see [TheBloke's documentation](https://huggingface.co/TheBloke/KafkaLM-70B-German-V0.1-GGUF) for general guidance on GGUF integration.
- **License**: Modified MIT (see [license link](https://github.com/MiniMax-AI/MiniMax-M2.1/blob/main/LICENSE)).

tildeopen-30b-instruct-lv-i1

The **TildeOpen-30B-Instruct-LV-i1-GGUF** is a quantized version of the base model **pazars/TildeOpen-30B-Instruct-LV**, optimized for deployment. It is an instruction-tuned language model trained on diverse datasets and supports multiple languages (en, de, fr, pl, ru, it, pt, cs, nl, es, fi, tr, hu, bg, uk, bs, hr, da, et, lt, ro, sk, sl, sv, no, lv, sr, sq, mk, is, mt, ga). The base model is licensed under CC-BY-4.0 and distributed for the Transformers library. This imatrix-quantized version is tailored for devices with limited resources, while the base model remains the original, full-precision version.

allenai_olmo-3.1-32b-think

The **Olmo-3.1-32B-Think** model is a large language model (LLM), packaged here in quantized versions for efficient inference. These quantizations of the original **allenai/Olmo-3.1-32B-Think** model were produced by **bartowski** using **imatrix** quantization.

### Key Features:
- **Base Model**: `allenai/Olmo-3.1-32B-Think` (unquantized version).
- **Quantized Versions**: Available in multiple formats with varying precision (e.g., `bf16`, `Q8_0`, `Q6_K_L`, `Q5_K_M`, `Q4_1`), derived from the original model using the **imatrix calibration dataset**.
- **Performance**: Optimized for low memory usage and efficient inference on GPUs/CPUs. Recommended quantization types include `Q6_K_L` (near-perfect quality) and `Q4_K_M` (default, balanced performance).
- **Downloads**: Available via the Hugging Face CLI; large quantizations may be split into multiple files.
- **License**: Apache-2.0.

### Recommended Quantization:
- Use `Q6_K_L` for the highest quality (near-perfect performance).
- Use `Q4_K_M` for a balance of performance and size.
- Avoid lower-quality options (e.g., `Q3_K_S`) unless specific hardware constraints apply.

This model is well suited to GPUs/CPUs with limited memory, where efficient quantization makes practical deployment possible.

qwen3-coder-30b-a3b-instruct-rtpurbo-i1

The model is a quantized version of the **Qwen3-Coder** large language model, tailored for code generation. The base model, **RTP-LLM/Qwen3-Coder-30B-A3B-Instruct-RTPurbo**, is a 30B-parameter mixture-of-experts variant optimized for instruction following and code-related tasks; the "A3B" suffix denotes roughly 3B parameters activated per token. It is trained on diverse data to excel at programming and logical reasoning. This repository provides a quantized (compressed) version of the model, which suits hardware with limited memory but loses some precision compared to the original. For a high-fidelity version, use the unquantized base model.

glm-4.5v-i1

The model is a **quantized version** of **GLM-4.5V**, a vision-language model originally developed by **zai-org**. This repository provides multiple quantized variants optimized for different trade-offs between size, speed, and quality. The base model supports Chinese and English, and this quantized version is designed for efficient inference on hardware with limited memory. Key features include:
- **Quantization options**: IQ2_M, Q2_K, Q4_K_M, IQ3_M, IQ4_XS, etc., with sizes ranging from 43 GB to 96 GB.
- **Performance**: Optimized for inference, with some variants (e.g., Q4_K_M) balancing speed and quality.
- **Vision support**: Image input requires the mmproj files, which are available in the static repository.
- **License**: MIT.

This quantized version suits applications that need a more compact, efficient model while retaining most of the capabilities of the base GLM-4.5V.

vibevoice

pocket-tts

qwen3-vl-30b-a3b-instruct

Meet Qwen3-VL, the most powerful vision-language model in the Qwen series to date. This generation delivers comprehensive upgrades across the board: superior text understanding and generation, deeper visual perception and reasoning, extended context length, enhanced spatial and video dynamics comprehension, and stronger agent interaction capabilities. It is available in Dense and MoE architectures that scale from edge to cloud, with Instruct and reasoning-enhanced Thinking editions for flexible, on-demand deployment.

#### Key Enhancements:
* **Visual Agent**: Operates PC/mobile GUIs: recognizes elements, understands functions, invokes tools, and completes tasks.
* **Visual Coding Boost**: Generates Draw.io/HTML/CSS/JS from images/videos.
* **Advanced Spatial Perception**: Judges object positions, viewpoints, and occlusions; provides stronger 2D grounding and enables 3D grounding for spatial reasoning and embodied AI.
* **Long Context & Video Understanding**: Native 256K context, expandable to 1M; handles books and hours-long video with full recall and second-level indexing.
* **Enhanced Multimodal Reasoning**: Excels in STEM/Math, with causal analysis and logical, evidence-based answers.
* **Upgraded Visual Recognition**: Broader, higher-quality pretraining is able to “recognize everything”: celebrities, anime, products, landmarks, flora/fauna, etc.
* **Expanded OCR**: Supports 32 languages (up from 19); robust in low light, blur, and tilt; better with rare/ancient characters and jargon; improved long-document structure parsing.
* **Text Understanding on par with pure LLMs**: Seamless text–vision fusion for lossless, unified comprehension.

#### Model Architecture Updates:
1. **Interleaved-MRoPE**: Full-frequency allocation over time, width, and height via robust positional embeddings, enhancing long-horizon video reasoning.
2. **DeepStack**: Fuses multi-level ViT features to capture fine-grained details and sharpen image–text alignment.
3. **Text–Timestamp Alignment**: Moves beyond T-RoPE to precise, timestamp-grounded event localization for stronger video temporal modeling.

This is the weight repository for Qwen3-VL-30B-A3B-Instruct.
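Image input is usually sent through the OpenAI-style chat format, with an `image_url` content part that can carry a base64 data URL. The sketch below builds such a body for this entry without sending it. The helper name and the PNG MIME default are assumptions.

```python
import base64
import json

def build_vision_request(image_bytes: bytes, question: str, mime: str = "image/png") -> str:
    """Return a chat-completions JSON body pairing a question with an inline image."""
    data_url = f"data:{mime};base64,{base64.b64encode(image_bytes).decode('ascii')}"
    body = {
        "model": "qwen3-vl-30b-a3b-instruct",
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": question},
                    {"type": "image_url", "image_url": {"url": data_url}},
                ],
            }
        ],
    }
    return json.dumps(body)
```

Inlining the image as a data URL avoids having the server fetch a remote file, at the cost of a larger request body.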

qwen3-vl-30b-a3b-thinking

Qwen3-VL-30B-A3B-Thinking is the reasoning-enhanced Thinking edition of Qwen3-VL-30B-A3B, a 30B-parameter MoE model with about 3B parameters activated per token.

qwen3-vl-4b-instruct

Qwen3-VL-4B-Instruct is the 4B parameter model of the Qwen3-VL series.

qwen3-vl-32b-instruct

Qwen3-VL-32B-Instruct is the 32B parameter model of the Qwen3-VL series.

qwen3-vl-4b-thinking

Qwen3-VL-4B-Thinking is the 4B-parameter, reasoning-enhanced Thinking edition of the Qwen3-VL series.

qwen3-vl-2b-thinking

Qwen3-VL-2B-Thinking is the 2B-parameter, reasoning-enhanced Thinking edition of the Qwen3-VL series.

qwen3-vl-2b-instruct

Qwen3-VL-2B-Instruct is the 2B parameter model of the Qwen3-VL series.

huihui-qwen3-vl-30b-a3b-instruct-abliterated

These are GGUF quantizations of Huihui-Qwen3-VL-30B-A3B-Instruct-abliterated.

qwen3-vl-8b-instruct

Qwen3-VL-8B-Instruct is the 8B-parameter model of the Qwen3-VL series. It uses the default inference parameters recommended in the Unsloth documentation for Qwen3-VL.

qwen3-vl-8b-thinking

Qwen3-VL-8B-Thinking is the 8B-parameter, reasoning-enhanced Thinking edition of the Qwen3-VL series. It uses the default inference parameters recommended in the Unsloth documentation for Qwen3-VL.

qwen3-omni-30b-a3b-instruct

Qwen3-Omni is a natively end-to-end multilingual omni-modal foundation model. It processes text, images, audio, and video, and delivers real-time streaming responses in both text and natural speech. This GGUF build runs on llama.cpp with the bundled mmproj for multimodal inputs.

qwen3-omni-30b-a3b-thinking

Qwen3-Omni-30B-A3B-Thinking is the reasoning-enhanced variant of Qwen3-Omni, a natively end-to-end multilingual omni-modal foundation model. It processes text, images, and audio and produces chain-of-thought reasoning before the final answer. This GGUF build runs on llama.cpp with the bundled mmproj.

qwen3-asr-0.6b

Qwen3-ASR is an automatic speech recognition model supporting multiple languages and batch inference.

qwen3-asr-1.7b

Qwen3-ASR is an automatic speech recognition model supporting multiple languages and batch inference.

glm-ocr

GLM-OCR is a vision-language model specialized for optical character recognition and document understanding, built on the GLM architecture. This GGUF build runs on llama.cpp with the bundled mmproj.

deepseek-ocr

DeepSeek-OCR is a vision-language model from DeepSeek AI specialized for optical character recognition and document understanding. This GGUF build runs on llama.cpp with the bundled mmproj.

ai21labs_ai21-jamba-reasoning-3b

AI21’s Jamba Reasoning 3B is a top-performing reasoning model that packs leading scores on intelligence benchmarks and highly efficient processing into a compact 3B build. The hybrid design combines Transformer attention with Mamba (a state-space model). Mamba layers are more efficient for sequence processing, while attention layers capture complex dependencies. This mix reduces memory overhead, improves throughput, and lets the model run smoothly on laptops, GPUs, and even mobile devices while maintaining impressive quality.

ibm-granite_granite-4.0-h-small

Granite-4.0-H-Small is a 32B-parameter long-context instruct model finetuned from Granite-4.0-H-Small-Base using a combination of permissively licensed open-source instruction datasets and internally collected synthetic datasets. This model is developed using a diverse set of techniques with a structured chat format, including supervised finetuning, model alignment using reinforcement learning, and model merging. Granite 4.0 instruct models feature improved instruction following (IF) and tool-calling capabilities, making them more effective in enterprise applications.

ibm-granite_granite-4.0-h-tiny

Granite-4.0-H-Tiny is a 7B-parameter long-context instruct model finetuned from Granite-4.0-H-Tiny-Base using a combination of permissively licensed open-source instruction datasets and internally collected synthetic datasets. This model is developed using a diverse set of techniques with a structured chat format, including supervised finetuning, model alignment using reinforcement learning, and model merging. Granite 4.0 instruct models feature improved instruction following (IF) and tool-calling capabilities, making them more effective in enterprise applications.

ibm-granite_granite-4.0-h-micro

Granite-4.0-H-Micro is a 3B-parameter long-context instruct model finetuned from Granite-4.0-H-Micro-Base using a combination of permissively licensed open-source instruction datasets and internally collected synthetic datasets. This model is developed using a diverse set of techniques with a structured chat format, including supervised finetuning, model alignment using reinforcement learning, and model merging. Granite 4.0 instruct models feature improved instruction following (IF) and tool-calling capabilities, making them more effective in enterprise applications.

ibm-granite_granite-4.0-micro

Granite-4.0-Micro is a 3B-parameter long-context instruct model finetuned from Granite-4.0-Micro-Base using a combination of permissively licensed open-source instruction datasets and internally collected synthetic datasets. This model is developed using a diverse set of techniques with a structured chat format, including supervised finetuning, model alignment using reinforcement learning, and model merging. Granite 4.0 instruct models feature improved instruction following (IF) and tool-calling capabilities, making them more effective in enterprise applications.

baidu_ernie-4.5-21b-a3b-thinking

Over the past three months, we have continued to scale the thinking capability of ERNIE-4.5-21B-A3B, improving both the quality and depth of reasoning and thereby advancing the competitiveness of ERNIE lightweight models in complex reasoning tasks. We are pleased to introduce ERNIE-4.5-21B-A3B-Thinking, featuring the following key enhancements:
- Significantly improved performance on reasoning tasks, including logical reasoning, mathematics, science, coding, text generation, and academic benchmarks that typically require human expertise.
- Efficient tool usage capabilities.
- Enhanced 128K long-context understanding capabilities.

Note: This version has an increased thinking length. We strongly recommend its use in highly complex reasoning tasks. ERNIE-4.5-21B-A3B-Thinking is a text MoE post-trained model, with 21B total parameters and 3B activated parameters for each token.

aurore-reveil_koto-small-7b-it

Koto-Small-7B-IT is an instruct-tuned version of Koto-Small-7B-PT, which was trained from MiMo-7B-Base on almost a billion tokens of creative-writing data. This model is meant for roleplaying and instruct use cases.

opengvlab_internvl3_5-30b-a3b

We introduce InternVL3.5, a new family of open-source multimodal models that significantly advances versatility, reasoning capability, and inference efficiency along the InternVL series. A key innovation is the Cascade Reinforcement Learning (Cascade RL) framework, which enhances reasoning through a two-stage process: offline RL for stable convergence and online RL for refined alignment. This coarse-to-fine training strategy leads to substantial improvements on downstream reasoning tasks, e.g., MMMU and MathVista. To optimize efficiency, we propose a Visual Resolution Router (ViR) that dynamically adjusts the resolution of visual tokens without compromising performance. Coupled with ViR, our Decoupled Vision-Language Deployment (DvD) strategy separates the vision encoder and language model across different GPUs, effectively balancing computational load. These contributions collectively enable InternVL3.5 to achieve up to a +16.0% gain in overall reasoning performance and a 4.05× inference speedup compared to its predecessor, i.e., InternVL3. In addition, InternVL3.5 supports novel capabilities such as GUI interaction and embodied agency. Notably, our largest model, i.e., InternVL3.5-241B-A28B, attains state-of-the-art results among open-source MLLMs across general multimodal, reasoning, text, and agentic tasks, narrowing the performance gap with leading commercial models like GPT-5. All models and code are publicly released.

opengvlab_internvl3_5-30b-a3b-q8_0

We introduce InternVL3.5, a new family of open-source multimodal models that significantly advances versatility, reasoning capability, and inference efficiency along the InternVL series. A key innovation is the Cascade Reinforcement Learning (Cascade RL) framework, which enhances reasoning through a two-stage process: offline RL for stable convergence and online RL for refined alignment. This coarse-to-fine training strategy leads to substantial improvements on downstream reasoning tasks, e.g., MMMU and MathVista. To optimize efficiency, we propose a Visual Resolution Router (ViR) that dynamically adjusts the resolution of visual tokens without compromising performance. Coupled with ViR, our Decoupled Vision-Language Deployment (DvD) strategy separates the vision encoder and language model across different GPUs, effectively balancing computational load. These contributions collectively enable InternVL3.5 to achieve up to a +16.0% gain in overall reasoning performance and a 4.05× inference speedup compared to its predecessor, InternVL3. In addition, InternVL3.5 supports novel capabilities such as GUI interaction and embodied agency. Notably, our largest model, InternVL3.5-241B-A28B, attains state-of-the-art results among open-source MLLMs across general multimodal, reasoning, text, and agentic tasks, narrowing the performance gap with leading commercial models like GPT-5. All models and code are publicly released.

opengvlab_internvl3_5-14b-q8_0

We introduce InternVL3.5, a new family of open-source multimodal models that significantly advances versatility, reasoning capability, and inference efficiency along the InternVL series. A key innovation is the Cascade Reinforcement Learning (Cascade RL) framework, which enhances reasoning through a two-stage process: offline RL for stable convergence and online RL for refined alignment. This coarse-to-fine training strategy leads to substantial improvements on downstream reasoning tasks, e.g., MMMU and MathVista. To optimize efficiency, we propose a Visual Resolution Router (ViR) that dynamically adjusts the resolution of visual tokens without compromising performance. Coupled with ViR, our Decoupled Vision-Language Deployment (DvD) strategy separates the vision encoder and language model across different GPUs, effectively balancing computational load. These contributions collectively enable InternVL3.5 to achieve up to a +16.0% gain in overall reasoning performance and a 4.05× inference speedup compared to its predecessor, InternVL3. In addition, InternVL3.5 supports novel capabilities such as GUI interaction and embodied agency. Notably, our largest model, InternVL3.5-241B-A28B, attains state-of-the-art results among open-source MLLMs across general multimodal, reasoning, text, and agentic tasks, narrowing the performance gap with leading commercial models like GPT-5. All models and code are publicly released.

opengvlab_internvl3_5-14b

We introduce InternVL3.5, a new family of open-source multimodal models that significantly advances versatility, reasoning capability, and inference efficiency along the InternVL series. A key innovation is the Cascade Reinforcement Learning (Cascade RL) framework, which enhances reasoning through a two-stage process: offline RL for stable convergence and online RL for refined alignment. This coarse-to-fine training strategy leads to substantial improvements on downstream reasoning tasks, e.g., MMMU and MathVista. To optimize efficiency, we propose a Visual Resolution Router (ViR) that dynamically adjusts the resolution of visual tokens without compromising performance. Coupled with ViR, our Decoupled Vision-Language Deployment (DvD) strategy separates the vision encoder and language model across different GPUs, effectively balancing computational load. These contributions collectively enable InternVL3.5 to achieve up to a +16.0% gain in overall reasoning performance and a 4.05× inference speedup compared to its predecessor, InternVL3. In addition, InternVL3.5 supports novel capabilities such as GUI interaction and embodied agency. Notably, our largest model, InternVL3.5-241B-A28B, attains state-of-the-art results among open-source MLLMs across general multimodal, reasoning, text, and agentic tasks, narrowing the performance gap with leading commercial models like GPT-5. All models and code are publicly released.

opengvlab_internvl3_5-8b

We introduce InternVL3.5, a new family of open-source multimodal models that significantly advances versatility, reasoning capability, and inference efficiency along the InternVL series. A key innovation is the Cascade Reinforcement Learning (Cascade RL) framework, which enhances reasoning through a two-stage process: offline RL for stable convergence and online RL for refined alignment. This coarse-to-fine training strategy leads to substantial improvements on downstream reasoning tasks, e.g., MMMU and MathVista. To optimize efficiency, we propose a Visual Resolution Router (ViR) that dynamically adjusts the resolution of visual tokens without compromising performance. Coupled with ViR, our Decoupled Vision-Language Deployment (DvD) strategy separates the vision encoder and language model across different GPUs, effectively balancing computational load. These contributions collectively enable InternVL3.5 to achieve up to a +16.0% gain in overall reasoning performance and a 4.05× inference speedup compared to its predecessor, InternVL3. In addition, InternVL3.5 supports novel capabilities such as GUI interaction and embodied agency. Notably, our largest model, InternVL3.5-241B-A28B, attains state-of-the-art results among open-source MLLMs across general multimodal, reasoning, text, and agentic tasks, narrowing the performance gap with leading commercial models like GPT-5. All models and code are publicly released.

opengvlab_internvl3_5-8b-q8_0

We introduce InternVL3.5, a new family of open-source multimodal models that significantly advances versatility, reasoning capability, and inference efficiency along the InternVL series. A key innovation is the Cascade Reinforcement Learning (Cascade RL) framework, which enhances reasoning through a two-stage process: offline RL for stable convergence and online RL for refined alignment. This coarse-to-fine training strategy leads to substantial improvements on downstream reasoning tasks, e.g., MMMU and MathVista. To optimize efficiency, we propose a Visual Resolution Router (ViR) that dynamically adjusts the resolution of visual tokens without compromising performance. Coupled with ViR, our Decoupled Vision-Language Deployment (DvD) strategy separates the vision encoder and language model across different GPUs, effectively balancing computational load. These contributions collectively enable InternVL3.5 to achieve up to a +16.0% gain in overall reasoning performance and a 4.05× inference speedup compared to its predecessor, InternVL3. In addition, InternVL3.5 supports novel capabilities such as GUI interaction and embodied agency. Notably, our largest model, InternVL3.5-241B-A28B, attains state-of-the-art results among open-source MLLMs across general multimodal, reasoning, text, and agentic tasks, narrowing the performance gap with leading commercial models like GPT-5. All models and code are publicly released.

opengvlab_internvl3_5-4b

We introduce InternVL3.5, a new family of open-source multimodal models that significantly advances versatility, reasoning capability, and inference efficiency along the InternVL series. A key innovation is the Cascade Reinforcement Learning (Cascade RL) framework, which enhances reasoning through a two-stage process: offline RL for stable convergence and online RL for refined alignment. This coarse-to-fine training strategy leads to substantial improvements on downstream reasoning tasks, e.g., MMMU and MathVista. To optimize efficiency, we propose a Visual Resolution Router (ViR) that dynamically adjusts the resolution of visual tokens without compromising performance. Coupled with ViR, our Decoupled Vision-Language Deployment (DvD) strategy separates the vision encoder and language model across different GPUs, effectively balancing computational load. These contributions collectively enable InternVL3.5 to achieve up to a +16.0% gain in overall reasoning performance and a 4.05× inference speedup compared to its predecessor, InternVL3. In addition, InternVL3.5 supports novel capabilities such as GUI interaction and embodied agency. Notably, our largest model, InternVL3.5-241B-A28B, attains state-of-the-art results among open-source MLLMs across general multimodal, reasoning, text, and agentic tasks, narrowing the performance gap with leading commercial models like GPT-5. All models and code are publicly released.

opengvlab_internvl3_5-4b-q8_0

We introduce InternVL3.5, a new family of open-source multimodal models that significantly advances versatility, reasoning capability, and inference efficiency along the InternVL series. A key innovation is the Cascade Reinforcement Learning (Cascade RL) framework, which enhances reasoning through a two-stage process: offline RL for stable convergence and online RL for refined alignment. This coarse-to-fine training strategy leads to substantial improvements on downstream reasoning tasks, e.g., MMMU and MathVista. To optimize efficiency, we propose a Visual Resolution Router (ViR) that dynamically adjusts the resolution of visual tokens without compromising performance. Coupled with ViR, our Decoupled Vision-Language Deployment (DvD) strategy separates the vision encoder and language model across different GPUs, effectively balancing computational load. These contributions collectively enable InternVL3.5 to achieve up to a +16.0% gain in overall reasoning performance and a 4.05× inference speedup compared to its predecessor, InternVL3. In addition, InternVL3.5 supports novel capabilities such as GUI interaction and embodied agency. Notably, our largest model, InternVL3.5-241B-A28B, attains state-of-the-art results among open-source MLLMs across general multimodal, reasoning, text, and agentic tasks, narrowing the performance gap with leading commercial models like GPT-5. All models and code are publicly released.

opengvlab_internvl3_5-2b

We introduce InternVL3.5, a new family of open-source multimodal models that significantly advances versatility, reasoning capability, and inference efficiency along the InternVL series. A key innovation is the Cascade Reinforcement Learning (Cascade RL) framework, which enhances reasoning through a two-stage process: offline RL for stable convergence and online RL for refined alignment. This coarse-to-fine training strategy leads to substantial improvements on downstream reasoning tasks, e.g., MMMU and MathVista. To optimize efficiency, we propose a Visual Resolution Router (ViR) that dynamically adjusts the resolution of visual tokens without compromising performance. Coupled with ViR, our Decoupled Vision-Language Deployment (DvD) strategy separates the vision encoder and language model across different GPUs, effectively balancing computational load. These contributions collectively enable InternVL3.5 to achieve up to a +16.0% gain in overall reasoning performance and a 4.05× inference speedup compared to its predecessor, InternVL3. In addition, InternVL3.5 supports novel capabilities such as GUI interaction and embodied agency. Notably, our largest model, InternVL3.5-241B-A28B, attains state-of-the-art results among open-source MLLMs across general multimodal, reasoning, text, and agentic tasks, narrowing the performance gap with leading commercial models like GPT-5. All models and code are publicly released.

lfm2-vl-450m

LFM2-VL is Liquid AI's first series of multimodal models, designed to process text and images at variable resolutions. Built on the LFM2 backbone, it is optimized for low-latency and edge AI applications. We're releasing the weights of two post-trained checkpoints: 450M parameters (for highly constrained devices) and 1.6B parameters (more capable, yet still lightweight). Key features: 2× faster inference on GPUs compared to existing VLMs while maintaining competitive accuracy; a flexible architecture with user-tunable speed-quality tradeoffs at inference time; and native-resolution processing up to 512×512, with intelligent patch-based handling of larger images that avoids upscaling and distortion.

lfm2-vl-1.6b

LFM2-VL is Liquid AI's first series of multimodal models, designed to process text and images at variable resolutions. Built on the LFM2 backbone, it is optimized for low-latency and edge AI applications. We're releasing the weights of two post-trained checkpoints: 450M parameters (for highly constrained devices) and 1.6B parameters (more capable, yet still lightweight). Key features: 2× faster inference on GPUs compared to existing VLMs while maintaining competitive accuracy; a flexible architecture with user-tunable speed-quality tradeoffs at inference time; and native-resolution processing up to 512×512, with intelligent patch-based handling of larger images that avoids upscaling and distortion.

lfm2-1.2b

LFM2-1.2B is a hybrid liquid model designed for edge AI and on-device deployment, offering fast inference and multilingual support across 8 languages. It is optimized for agentic tasks, data extraction, and multi-turn conversations, and runs efficiently on CPUs, GPUs, and NPUs.

liquidai_lfm2-350m-extract